A Crash Course in Docker

In the old days of software development, getting an application from code to production was slow and painful. Developers struggled with dependency hell, and because test and production environments differed in subtle ways, code would mysteriously work in one environment but not the other. Then, in 2013, along came Docker. Originally created within dotCloud as an experiment with container technology to simplify deployment, Docker was open-sourced that March, and over the next 15 months it emerged as a leading container platform.

In this newsletter, we’ll explore the history of container technology, the specific innovations that powered Docker's meteoric rise, and the Linux fundamentals enabling its magic. We’ll explain what Docker images are, how they differ from virtual machines, and whether you need Kubernetes to use Docker effectively. By the end, you’ll understand why Docker has become the standard for packaging and distributing applications in the cloud.

Tracing the Path from Bare Metal to Docker

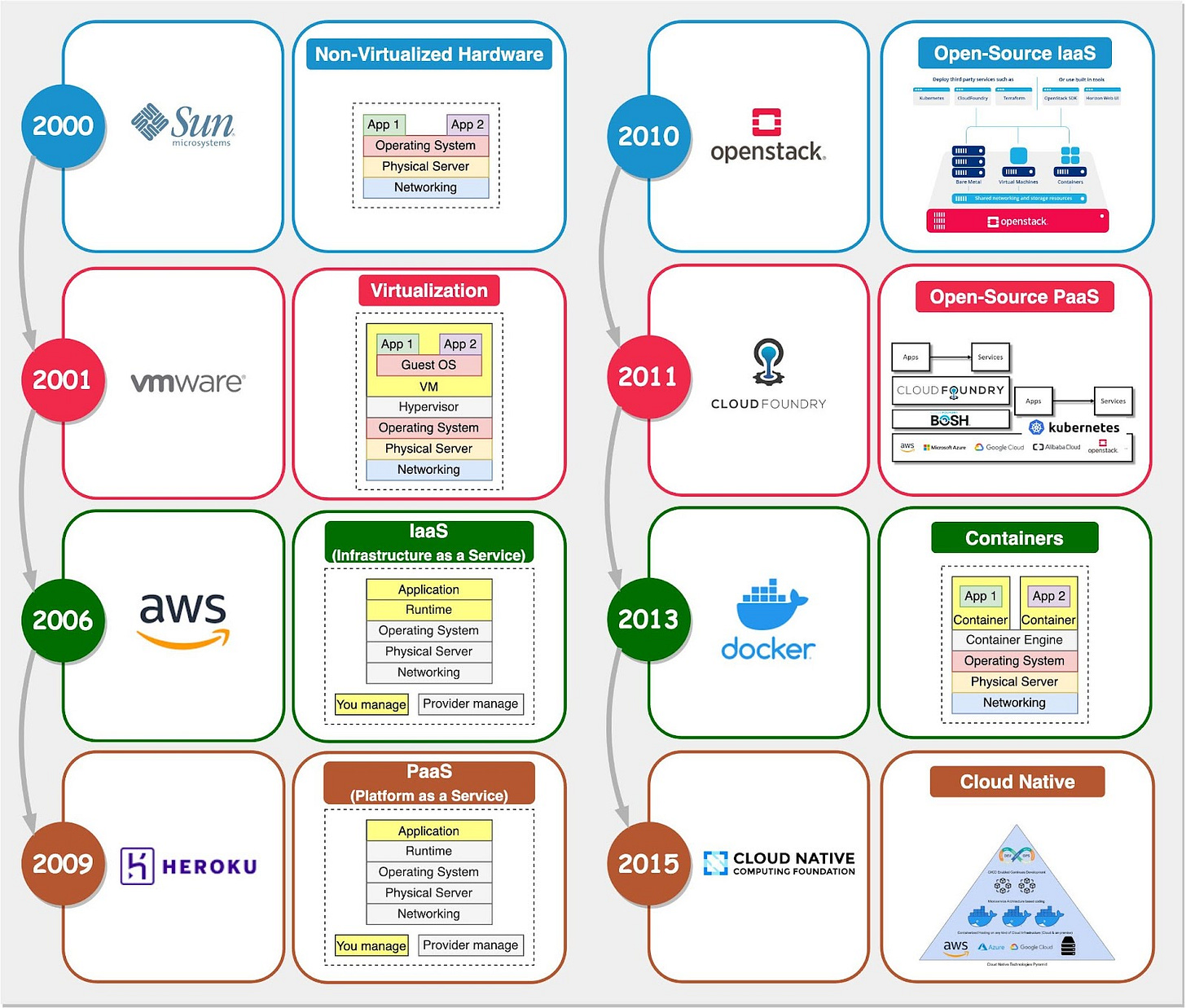

In the past two decades, backend infrastructure evolved rapidly, as illustrated in the timeline below:

In the early days of computing, applications ran directly on physical servers (“bare metal”). Teams purchased, racked, stacked, powered on, and configured every new machine, so just getting started was very time-consuming.

Then came hardware virtualization, which allowed multiple virtual machines (VMs) to run on a single powerful physical server and made more efficient use of its resources. But provisioning and managing VMs still required heavy lifting.

Next came infrastructure-as-a-service (IaaS) offerings such as Amazon EC2. IaaS removed the need to set up physical hardware and provided virtual resources on demand. But developers still had to manually configure VMs with libraries, dependencies, and so on.

Platform-as-a-service (PaaS) offerings such as Cloud Foundry and Heroku were the next big shift. PaaS provided a managed development platform that simplified deployment. But inconsistencies between local and cloud environments still led to “works on my machine” issues.

This brought us to Docker in 2013. Docker improved upon PaaS through two key innovations.

Lightweight Containerization

Container technology is often compared to virtual machines, but they use very different approaches.

A VM hypervisor emulates the underlying server hardware, such as CPU, memory, and disk, so that multiple virtual machines can share the same physical resources. Each VM runs its own guest operating system on this virtualized hardware. Processes running in a guest OS can’t see the host’s hardware resources or other VMs.

In contrast, Docker containers share the host operating system kernel. The Docker engine does not virtualize OS resources. Instead, containers achieve isolation through Linux namespaces and control groups (cgroups).

Namespaces separate processes, networking, mounts, and other resources, so each container gets its own view of them. cgroups limit and meter usage of resources like CPU, memory, and disk I/O for containers. We’ll revisit both in more depth later.

This makes containers more lightweight and portable than VMs. Multiple containers can share a host and its resources, and they start much faster because there is no full guest OS to boot.
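To make the cgroup side more concrete, here is a minimal Python sketch that reads the CPU and memory limits a container runtime has set. It assumes a Linux host using cgroup v2, where `cpu.max` holds `$MAX $PERIOD` in microseconds and `memory.max` holds a byte count, with `max` meaning unlimited; the cgroup is assumed to be visible under `/sys/fs/cgroup`. For reference, Docker's documentation describes `docker run --cpus=1.5` as equivalent to a quota of 150000 over a period of 100000. The helper names (`parse_cpu_max`, `parse_memory_max`, `read_limits`) are hypothetical and not part of Docker.

```python
from pathlib import Path
from typing import Optional

# Hypothetical helpers (not a Docker API); assumes cgroup v2 file formats.


def parse_cpu_max(text: str) -> Optional[float]:
    """Turn a cpu.max value like "50000 100000" into a CPU count (0.5).

    The file holds "$MAX $PERIOD" in microseconds; "max" means no limit,
    in which case None is returned.
    """
    quota, period = text.split()
    if quota == "max":
        return None
    return int(quota) / int(period)


def parse_memory_max(text: str) -> Optional[int]:
    """Turn a memory.max value into bytes; "max" (no limit) becomes None."""
    value = text.strip()
    return None if value == "max" else int(value)


def read_limits(cgroup_dir: str = "/sys/fs/cgroup") -> dict:
    """Read CPU and memory limits for the cgroup mounted at cgroup_dir.

    Missing files (e.g. on a cgroup v1 host or at the root cgroup) are
    reported as None rather than raising.
    """
    base = Path(cgroup_dir)
    limits = {"cpus": None, "memory_bytes": None}
    cpu_file = base / "cpu.max"
    mem_file = base / "memory.max"
    if cpu_file.is_file():
        limits["cpus"] = parse_cpu_max(cpu_file.read_text())
    if mem_file.is_file():
        limits["memory_bytes"] = parse_memory_max(mem_file.read_text())
    return limits


print(parse_cpu_max("150000 100000"))   # 1.5
print(parse_cpu_max("max 100000"))      # None
print(parse_memory_max("536870912\n"))  # 536870912 (512 MiB)

if __name__ == "__main__":
    # Output depends on where this runs; without cgroup v2 files both are None.
    print(read_limits())
```

Note that nothing here is virtualized: the limits are plain kernel settings on an ordinary group of host processes, which is part of why containers stay so light.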

Docker is not “lightweight virtualization,” as some describe it. It uses Linux primitives to isolate processes rather than virtualizing hardware the way a hypervisor does. This OS-level isolation is what makes Docker containers lightweight.
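The process isolation that namespaces provide can be illustrated with a toy model of a PID namespace. This Python sketch is a simulation, not kernel code, and the class `PidNamespace` and function `spawn` are hypothetical. It captures one real property of Linux PID namespaces: the first process in a new namespace sees itself as PID 1, while the host still tracks it as an ordinary process with its own, different PID.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class PidNamespace:
    """Toy model of a Linux PID namespace (a simulation, not kernel code)."""
    name: str
    parent: Optional["PidNamespace"] = None
    next_pid: int = 1  # next PID number this namespace will hand out

    def allocate(self) -> int:
        pid = self.next_pid
        self.next_pid += 1
        return pid


def spawn(ns: PidNamespace) -> Dict[str, int]:
    """Create a process in `ns` and return the PID it gets in `ns`
    and in every ancestor namespace, innermost first."""
    pids: Dict[str, int] = {}
    current: Optional[PidNamespace] = ns
    while current is not None:
        pids[current.name] = current.allocate()
        current = current.parent
    return pids


# Pretend the host already runs many processes.
host = PidNamespace("host", next_pid=4321)
container = PidNamespace("container", parent=host)

print(spawn(container))  # {'container': 1, 'host': 4321}
print(spawn(container))  # {'container': 2, 'host': 4322}
```

Because the containerized process is just another host process with an extra PID mapping, there is no guest OS to boot; the kernel only changes what the process can see.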

Application Packaging

Before Docker’s release in 2013, Cloud Foundry was a widely used open-source PaaS. Many companies adopted Cloud Foundry to build their own PaaS offerings.

Compared to IaaS, PaaS improves developer experience by handling deployment and application runtimes. Cloud Foundry provided these key advantages:

Avoiding vendor lock-in: applications built on it were portable across PaaS implementations.

Support for diverse infrastructure environments and scaling needs.

Comprehensive support for major languages like Java, Ruby, and JavaScript, and databases like MySQL and PostgreSQL.

A set of packaging and distribution tools for deploying applications.

Cloud Foundry relied on Linux containers under the hood to provide isolated application sandbox environments. However, this core container technology powering Cloud Foundry was not exposed as a user-facing feature or highlighted as a key architectural component.

The companies offering Cloud Foundry PaaS solutions overlooked the potential of unlocking containers as a developer tool. They failed to recognize how containers could be transformed from an internal isolation mechanism to an externalized packaging format.

Docker became popular by solving two key PaaS packaging problems with container images:

Bundling the app, configs, dependencies, and OS libraries and tools (but not the kernel, which is shared with the host) into a single deployable image

Keeping the local development environment consistent with the cloud runtime environment

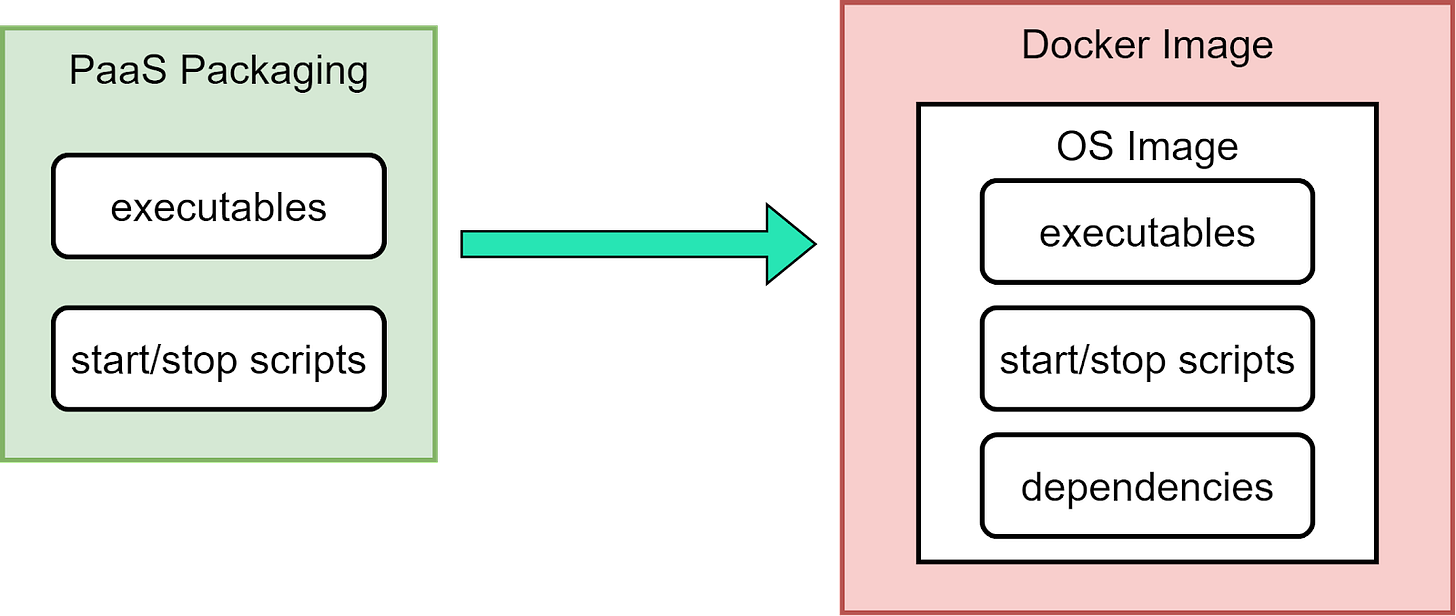

The diagram below shows a comparison.

This elegantly addressed the dependency and compatibility issues that plagued PaaS. Cloud Foundry, however, was slow to add support for Docker images, which allowed Docker images to proliferate across cloud computing environments.
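The packaging idea behind Docker images can be sketched in a few lines of Python. Docker images are identified by sha256 content digests, so identical contents yield the same identifier on a laptop and in the cloud, while any changed dependency yields a new one. This is a deliberately simplified, hypothetical model: `image_digest` hashes a dictionary of file paths and contents, whereas the real image format hashes layer archives, a configuration object, and a manifest. The file contents below are made up for illustration.

```python
import hashlib
from typing import Dict


def image_digest(files: Dict[str, bytes]) -> str:
    """Return a deterministic "sha256:<hex>" digest for a set of files.

    Hypothetical, simplified model of a content-addressed image: paths are
    hashed in sorted order, so the result does not depend on dict order.
    """
    h = hashlib.sha256()
    for path in sorted(files):
        encoded_path = path.encode("utf-8")
        data = files[path]
        # Length-prefix each field so different splits of the same bytes
        # (path "ab" + data "c" vs path "a" + data "bc") give different input.
        h.update(len(encoded_path).to_bytes(8, "big"))
        h.update(encoded_path)
        h.update(len(data).to_bytes(8, "big"))
        h.update(data)
    return "sha256:" + h.hexdigest()


# Hypothetical image contents: app code, a dependency pin, and OS files.
app = {
    "/app/main.py": b"print('hello')\n",
    "/app/requirements.txt": b"somelib==1.0\n",
    "/etc/os-release": b"ID=debian\n",
}

laptop = image_digest(app)
cloud = image_digest(dict(reversed(list(app.items()))))  # same files, other order
print(laptop == cloud)  # True

bumped = {**app, "/app/requirements.txt": b"somelib==1.1\n"}
print(image_digest(bumped) == laptop)  # False
```

In this model, deploying by digest rather than by a mutable name is what guarantees the cloud runs byte-for-byte what was tested locally.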

From Docker to Kubernetes

Docker gained early popularity through its innovations in application packaging and deployment. Its initial success was largely due to this novel way of isolating applications in lightweight containers.

As Docker's popularity grew, the company sought to expand beyond containerization into a full PaaS platform. This led to the development of Docker Swarm for cluster management and the acquisition of Orchard, the team behind Fig (later Docker Compose), to strengthen its orchestration capabilities.

Docker’s aspirations caught the attention of some tech giants. Google, Red Hat, and other PaaS companies wanted in on this hot new technology.

Let’s see what happened between 2013 and 2018 with the diagram below: