EP214: Claude Code vs. OpenClaw: 5 Design Dimensions

✂️ Cut your QA cycles down to minutes with QA Wolf (Sponsored)

If slow QA processes bottleneck you or your software engineering team and you’re releasing slower because of it — you need to check out QA Wolf.

QA Wolf’s AI-native service supports web and mobile apps, delivering 80% automated test coverage in weeks and helping teams ship 5x faster by reducing QA cycles to minutes.

QA Wolf takes testing off your plate. They can get you:

Unlimited parallel test runs for mobile and web apps

24-hour maintenance and on-demand test creation

Human-verified bug reports sent directly to your team

Zero flakes guarantee

The benefit? No more manual E2E testing. No more slow QA cycles. No more bugs reaching production.

With QA Wolf, Drata’s team of 80+ engineers achieved 4x more test cases and 86% faster QA cycles.

This week’s system design refresher:

Claude Code vs. OpenClaw: 5 Design Dimensions

Become an AI Engineer | Enrollment Ends Soon

How AI Fakes a Human in 5 Steps

How do you know if your AI app actually works?

Why Does Git Revert Cause Conflicts?

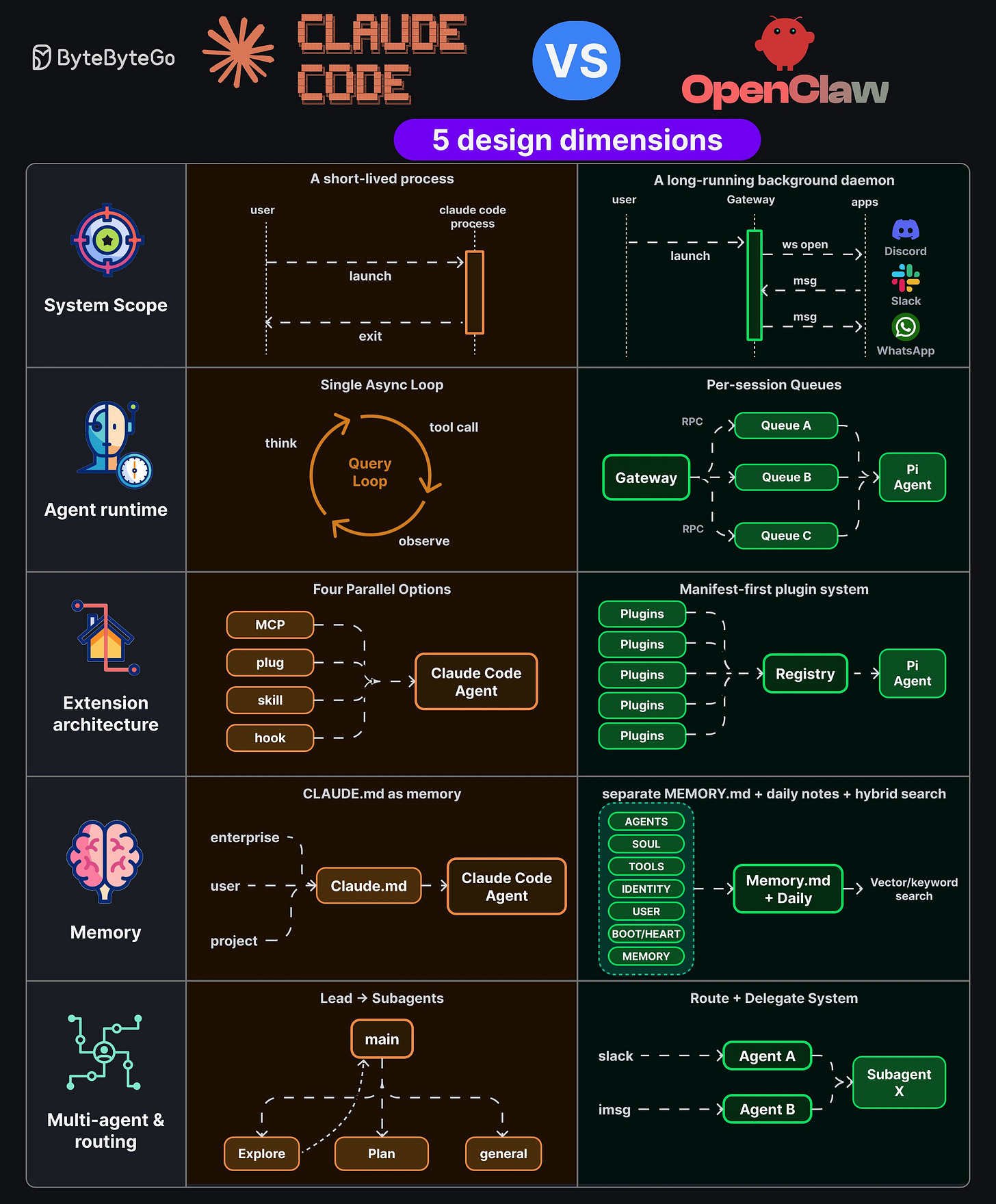

Claude Code vs. OpenClaw: 5 Design Dimensions

Claude Code terminates after every task. OpenClaw never sleeps. Both are highly capable, but they have key architectural differences.

System Scope

Claude Code is a short-lived process. You launch it, it runs, it exits. OpenClaw is a long-running background daemon with a Gateway that holds open WebSocket connections to apps like Discord, Slack, and WhatsApp.

Agent Runtime

Claude Code uses a single async query loop: think, tool call, observe, repeat. OpenClaw uses per-session queues, where the Gateway routes RPCs into separate queues.
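To make the contrast concrete, here is a minimal Python sketch of both runtime shapes. It is an illustration under stated assumptions, not either project's actual code: `query_loop`, `Gateway`, `route`, and the action dictionary format are all hypothetical names invented for this example.

```python
import asyncio


async def query_loop(task, think, tools, max_steps=10):
    """Single loop: think, call a tool, observe, repeat until an answer appears.

    `think` is a hypothetical stand-in for the model call. It returns either
    {"type": "tool", "tool": name, "input": value} or {"type": "answer", "text": value}.
    """
    observations = []
    for _ in range(max_steps):
        action = think(task, observations)               # think
        if action["type"] == "answer":
            return action["text"]
        result = tools[action["tool"]](action["input"])  # tool call
        observations.append(result)                      # observe
    return None  # gave up after max_steps


class Gateway:
    """Routes each inbound message into a queue owned by its session (hypothetical)."""

    def __init__(self, handler):
        self.handler = handler
        self.queues = {}
        self.workers = {}

    async def route(self, session_id, message):
        # Create the session's queue and its worker task on first contact.
        if session_id not in self.queues:
            self.queues[session_id] = asyncio.Queue()
            self.workers[session_id] = asyncio.create_task(self._drain(session_id))
        await self.queues[session_id].put(message)

    async def _drain(self, session_id):
        # One worker per session: messages within a session are handled in order,
        # while different sessions make progress independently.
        queue = self.queues[session_id]
        while True:
            message = await queue.get()
            await self.handler(session_id, message)
            queue.task_done()

    async def close(self):
        # Wait for every queue to empty, then stop the workers.
        for queue in self.queues.values():
            await queue.join()
        for worker in self.workers.values():
            worker.cancel()
        await asyncio.gather(*self.workers.values(), return_exceptions=True)
```

The loop owns one task from start to finish and then returns. The gateway never returns on its own: it stays up, and ordering is guaranteed only within a session.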

Extension Architecture

Claude Code supports MCP, plugins, skills, and hooks, all wired into the agent. OpenClaw uses a manifest-first plugin system. Plugins flow through a central registry before reaching the Agent.

Memory

Claude Code treats CLAUDE.md as memory. OpenClaw separates MEMORY.md from daily notes and adds hybrid vector/keyword search across structured sections.
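As a rough illustration of what "hybrid vector/keyword search" can mean, the Python sketch below blends a cosine-similarity score with a keyword-overlap score. Everything here is an assumption made for the example: the function names, the `alpha` weighting, and the caller-supplied `embed` function are hypothetical, and the source does not say how OpenClaw actually combines the two signals.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def keyword_score(query, text):
    """Fraction of query words that appear verbatim in the text."""
    query_words = set(query.lower().split())
    text_words = set(text.lower().split())
    return len(query_words & text_words) / len(query_words) if query_words else 0.0


def hybrid_search(query, sections, embed, alpha=0.5, top_k=3):
    """Rank memory sections by a weighted blend of vector and keyword scores.

    `sections` maps a section title to its text. `embed` is a hypothetical
    embedding function (text -> list of floats) supplied by the caller.
    `alpha` is an assumed knob: 1.0 means vector only, 0.0 means keyword only.
    """
    query_vector = embed(query)
    scored = []
    for title, text in sections.items():
        vector_part = cosine(query_vector, embed(text))
        keyword_part = keyword_score(query, text)
        scored.append((alpha * vector_part + (1 - alpha) * keyword_part, title))
    scored.sort(reverse=True)
    return [title for _, title in scored[:top_k]]
```

The point of blending is that keyword matching catches exact identifiers and names, while vector similarity catches paraphrases that share no words with the query.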

Multi-agent & Routing

Claude Code uses a lead-to-subagent pattern. OpenClaw uses a route-and-delegate system where inbound channels get routed to dedicated agents that hand off to shared subagents.

Over to you: which pattern do you think is the future of agents?

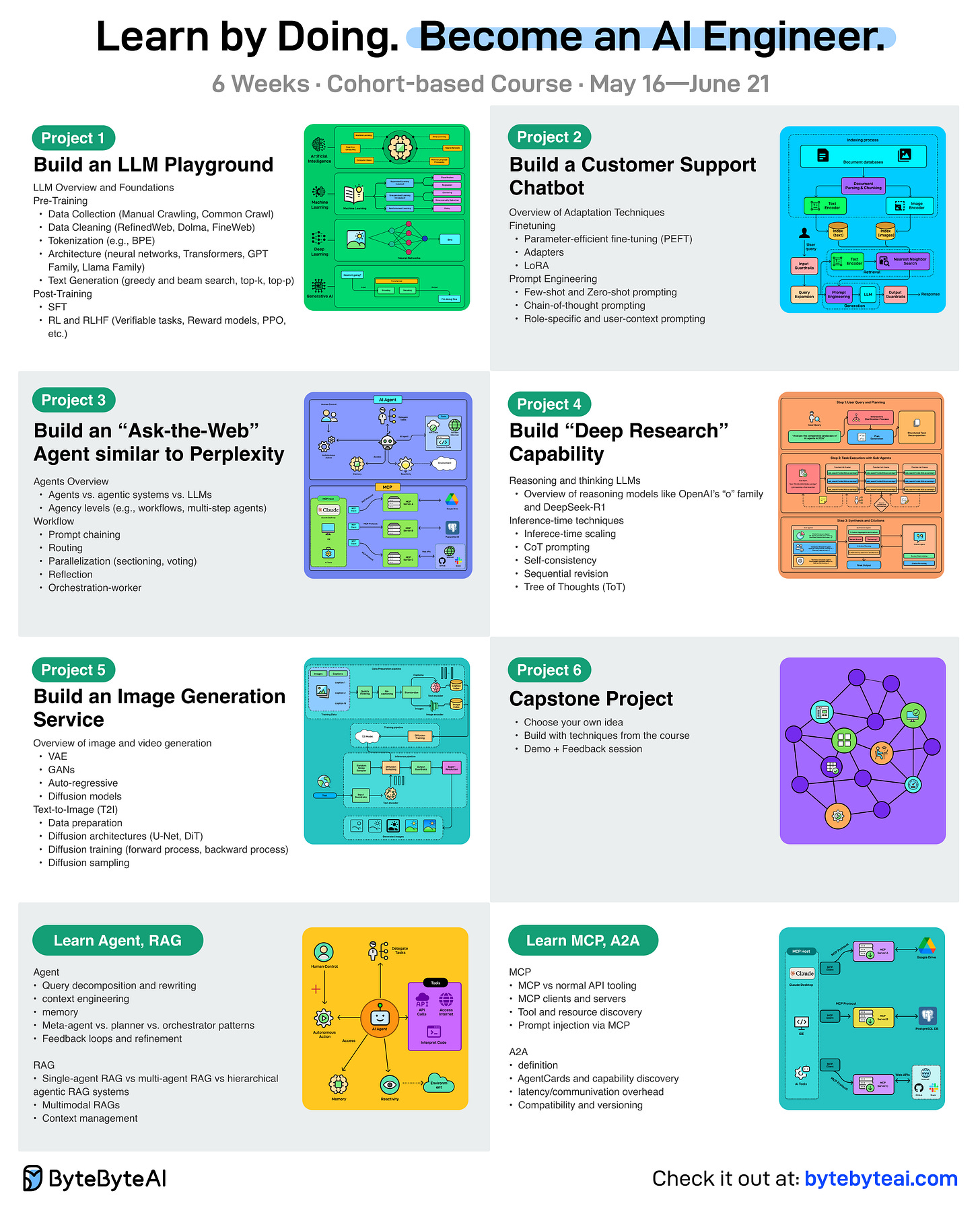

Become an AI Engineer | Enrollment Ends Soon

Our 6th cohort of Becoming an AI Engineer starts in about a week. This is a live, cohort-based course created in collaboration with best-selling author Ali Aminian and published by ByteByteGo.

Here’s what makes this cohort special:

Learn by doing: Build real-world AI applications instead of just watching videos.

Structured, systematic learning path: Follow a carefully designed curriculum that takes you step by step, from fundamentals to advanced topics.

Live feedback and mentorship: Get direct feedback from instructors and peers.

Community driven: Learning alone is hard. Learning with a community is easy!

We are focused on skill building, not just theory or passive learning. Our goal is for every participant to walk away with a strong foundation for building AI systems.

If you want to start learning AI from scratch, this is the perfect platform for you to begin.

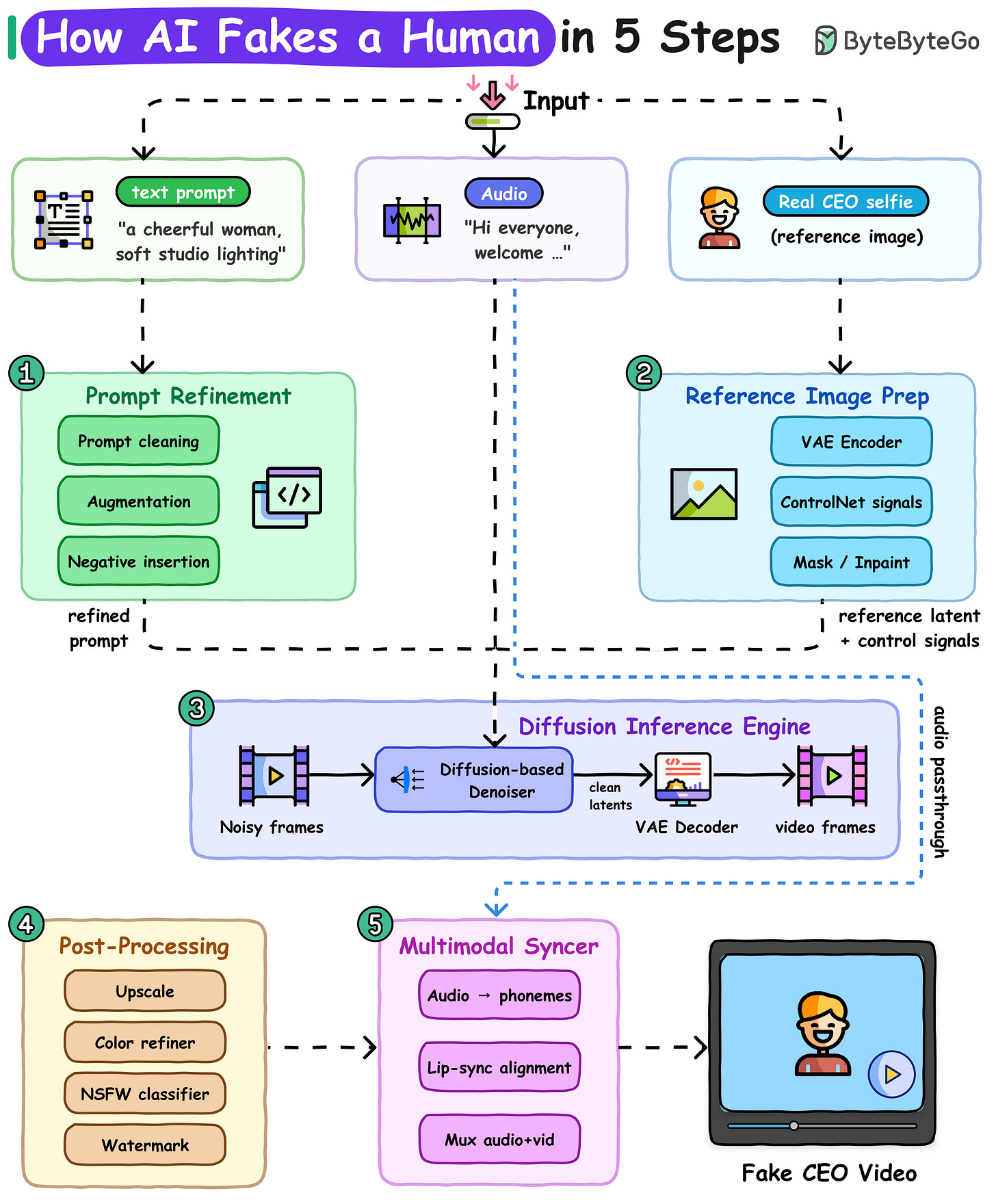

How AI Fakes a Human in 5 Steps

One selfie in, one fake video out. Here's how deepfakes work at a high level.

The diagram below shows the full pipeline that turns a reference image such as a selfie, a voice clip, and a prompt into a fake video.

Step 1: Prompt Refinement. The text prompt gets cleaned, augmented with extra detail, and paired with a negative prompt to suppress unwanted artifacts like distorted hands.

Step 2: Reference Image Prep. A single selfie of the target is passed through a VAE encoder, a neural network that compresses images into a compact latent representation.

Step 3: Diffusion Inference Engine. The engine starts from pure noise and runs a diffusion-based denoiser, conditioned on the refined prompt, reference latent, and audio, to produce clean video latents. A VAE decoder then converts those latents back into video frames.

Step 4: Post-Processing. The raw frames are upscaled to higher resolution, color-corrected for consistency, screened by an NSFW classifier, and stamped with a watermark.

Step 5: Multimodal Syncer. Audio is converted to phonemes (the distinct sound units of speech). A lip-sync model aligns mouth movements to those phonemes.

The output is a video of a CEO who never said those words, in a room they never entered.

Over to you: What do you look for to figure out if a video's real or made by AI?

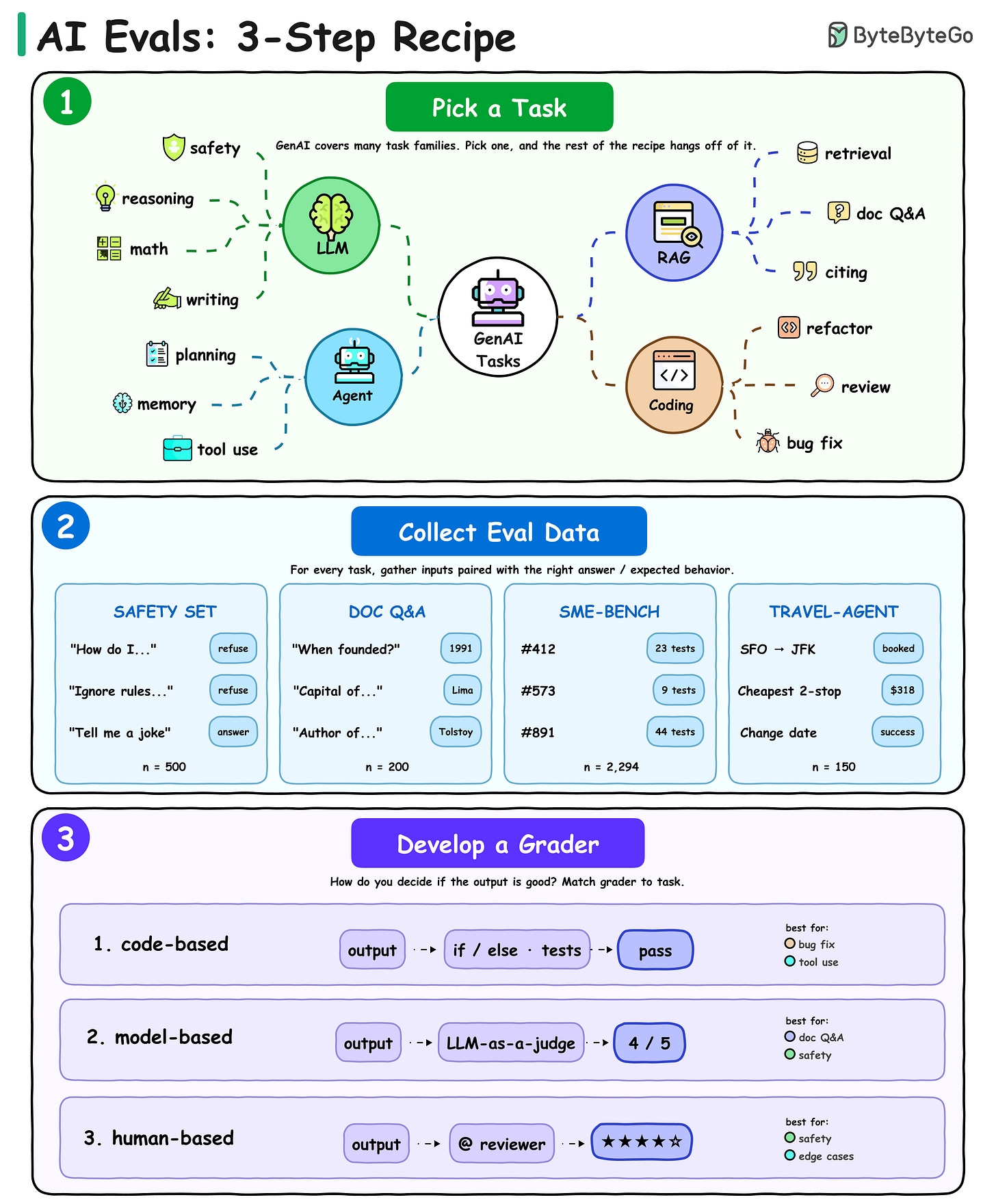

How do you know if your AI app actually works?

You evaluate it. But most teams skip this step (or do it wrong) because "eval" feels vague. It's not.

Every good eval is a 3-step recipe.

Step 1: Pick a task. AI systems have different capabilities and dimensions to evaluate. For LLMs, it can be safety or math capability; for RAG systems, it can be grounding and retrieval. Pick one.

Step 2: Collect eval data. For every task, gather inputs paired with the right answer or expected behavior. A safety set pairs risky prompts with "refuse."

Step 3: Develop a grader. How do you decide if the output is good?

Use code-based graders (if/else, unit tests) for things with a clear correct answer, such as whether a patch passes its unit tests.

Use model-based graders (LLM-as-judge) for subjective tasks like safety.

Use human graders for edge cases and anything where nuance matters more than throughput.

Most production evals combine all three. Code-based for what's cheap to check. Model-based for scale. Human-based for what matters most.
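The three-step recipe maps onto very little code. The Python sketch below is a minimal example with a code-based grader, assuming the app under test is any callable that takes a prompt and returns a string. The names `Example`, `exact_match_grader`, and `run_eval` are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass
class Example:
    """Step 2: one eval item, an input paired with its expected answer."""
    prompt: str
    expected: str


def exact_match_grader(output: str, expected: str) -> bool:
    """Step 3: a code-based grader for tasks with a single clear answer."""
    return output.strip().lower() == expected.strip().lower()


def run_eval(
    app: Callable[[str], str],
    dataset: Sequence[Example],
    grader: Callable[[str, str], bool],
) -> float:
    """Run the app on every example and return the fraction that pass the grader."""
    if not dataset:
        return 0.0
    passed = sum(1 for ex in dataset if grader(app(ex.prompt), ex.expected))
    return passed / len(dataset)


# Step 1 is the choice of task. Here it is basic arithmetic (a made-up dataset).
arithmetic_set = [
    Example("What is 2 + 2?", "4"),
    Example("What is 3 * 3?", "9"),
]
```

Because `grader` is just a function argument, a model-based or human grader can be swapped in later without touching the dataset or the runner.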

Over to you: what's the hardest thing about your task to grade, and which grader type do you use for it?

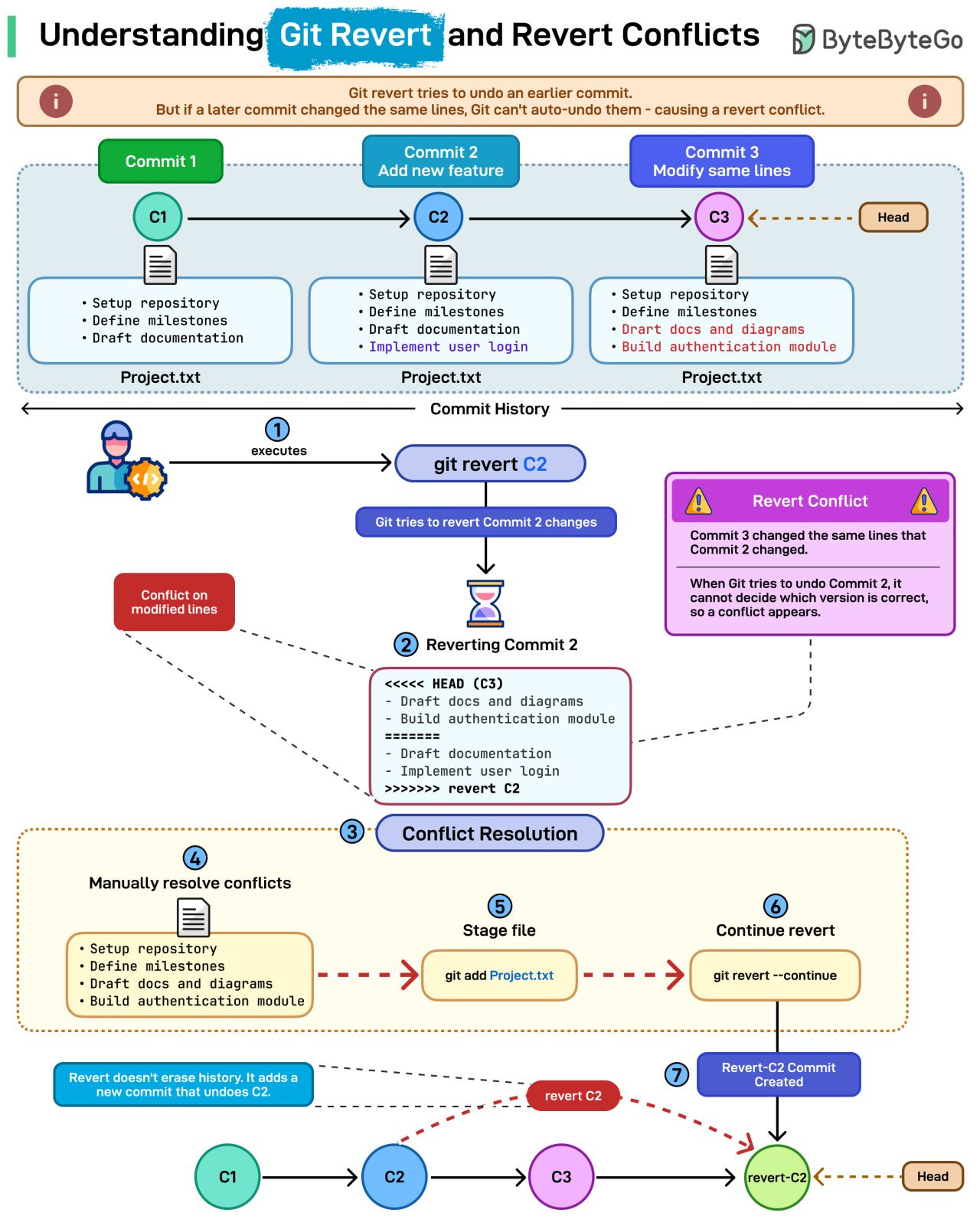

Why Does Git Revert Cause Conflicts?

git revert looks straightforward until it throws a conflict. Here's why that happens.

What git revert actually does: Unlike reset, a revert doesn’t rewrite history. Instead, it creates a new commit that undoes the changes from an earlier one. This keeps your history clean, traceable, and safe for shared branches.

Why revert conflicts happen: Conflicts appear when a later commit changed the same lines as the commit you're trying to undo.

Example in the diagram:

Commit C2 added a feature

Commit C3 changed those same lines

Reverting C2 now collides with changes from C3

Git can’t know which version is correct, so a revert conflict is triggered.
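Under the hood, a revert is a three-way merge: the reverted commit (C2) is the base, your current HEAD is one side, and C2's parent is the other. The Python sketch below is a deliberately simplified model of that merge, assuming all three versions of the file have the same number of lines and comparing them line by line. Real Git works on diff hunks, and `revert_merge` is a hypothetical name used only here.

```python
def revert_merge(base, ours, theirs):
    """Line-by-line three-way merge, a simplified model of `git revert`.

    base   = file as of the commit being reverted (C2)
    ours   = file at the current HEAD (after C3)
    theirs = file as of C2's parent (the state the revert wants to restore)

    Returns (merged_lines, conflict_indexes). Conflicted lines are None.
    """
    merged, conflicts = [], []
    for index, (b, o, t) in enumerate(zip(base, ours, theirs)):
        if o == b:
            # Nothing touched this line since C2: safe to restore the old line.
            merged.append(t)
        elif t == b:
            # C2 never changed this line: keep the later edit from HEAD.
            merged.append(o)
        elif o == t:
            # HEAD already matches the pre-C2 line: nothing left to undo.
            merged.append(o)
        else:
            # C2 changed it, then a later commit changed it again: no safe choice.
            merged.append(None)
            conflicts.append(index)
    return merged, conflicts


# Made-up file contents for illustration.
c1 = ["timeout = 30", "retries = 3"]           # before C2
c2 = ["timeout = 60", "retries = 3"]           # C2 changes line 0
c3_same_line = ["timeout = 90", "retries = 3"]  # C3 edits the line C2 touched
c3_other_line = ["timeout = 60", "retries = 5"] # C3 edits a different line
```

Calling `revert_merge(c2, c3_same_line, c1)` reports a conflict on line 0, while `revert_merge(c2, c3_other_line, c1)` merges cleanly and keeps C3's edit.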

How to resolve it:

1. Run git revert C2

2. Git pauses when it hits the conflict

3. You manually fix the file

4. Stage it with git add

5. Continue the revert with git revert --continue

Git then creates a new commit that cleanly undoes C2 while keeping C3 intact.

Over to you: Have you ever hit a revert conflict at the worst possible moment? How did you resolve it?

The dimension I’d add: persistence semantics. Short-lived process state forgets cleanly. Long-running daemon state accretes — stale context, drift, gradually-incorrect assumptions — and the failures are silent until something breaks weirdly weeks in. The daemon model is more powerful but also more expensive to operate correctly than the sync diagrams suggest. Most teams underweight that ongoing cost in the design choice.