How Agoda Built a Single Source of Truth for Financial Data

Agoda generates and processes millions of financial data points (sales, costs, revenue, and margins) every day.

These metrics are fundamental to daily operations, reconciliation, general ledger activities, financial planning, and strategic evaluation. They not only enable Agoda to predict and assess financial outcomes but also provide a comprehensive view of the company’s overall financial health.

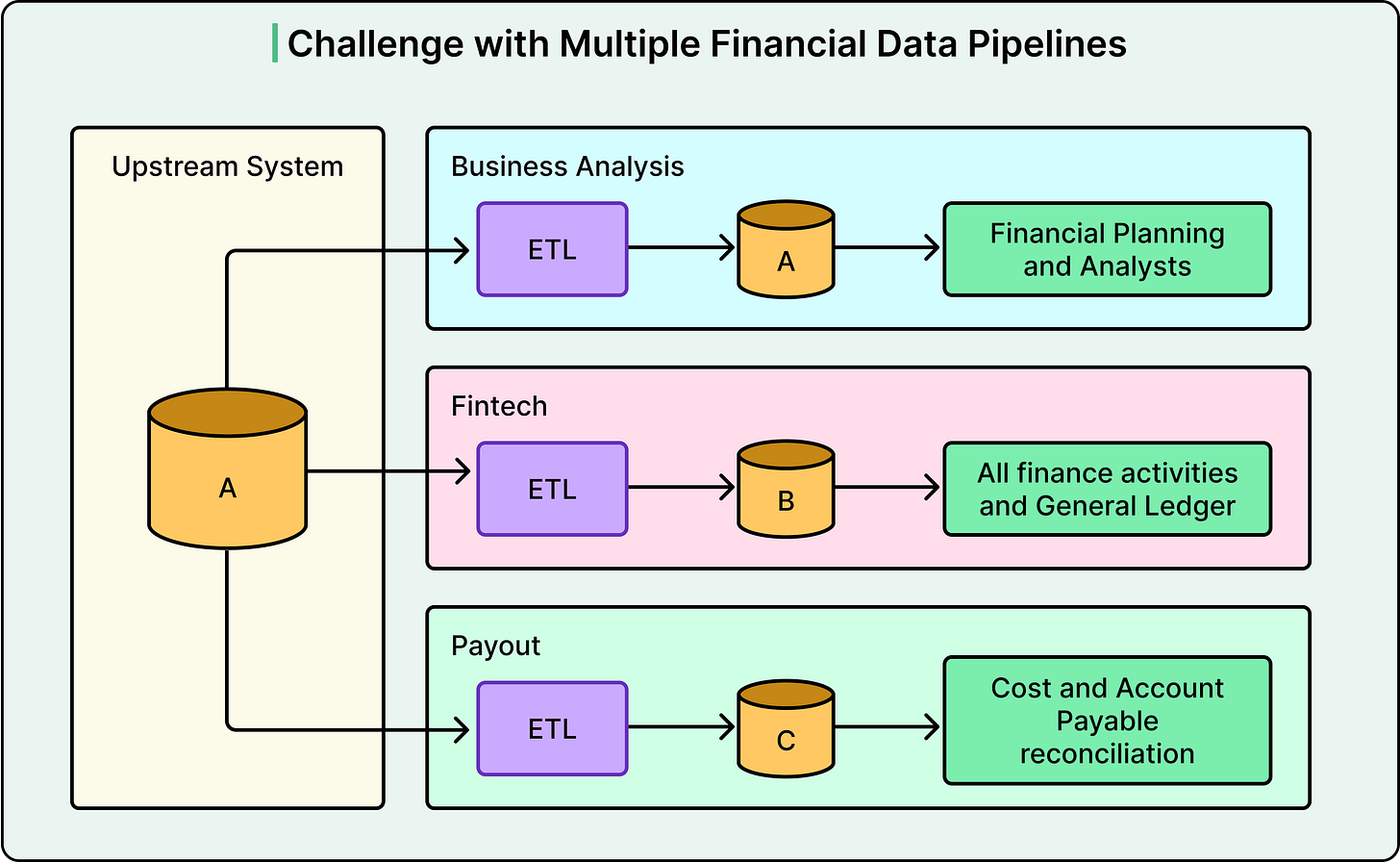

Given the sheer volume of data and the diverse requirements of different teams, the Data Engineering, Business Intelligence, and Data Analysis teams each developed their own data pipelines to meet their specific demands. The appeal of separate data pipeline architectures lies in their simplicity, clear ownership boundaries, and ease of development.

However, Agoda soon discovered that maintaining separate financial data pipelines, each with its own logic and definitions, introduced discrepancies and inconsistencies that could affect the company’s financial statements. In other words, there was no single source of truth, which is a serious problem for financial data.

In this article, we will look at how the Agoda engineering team built a single source of truth for its financial data and the challenges encountered.

Disclaimer: This post is based on publicly shared details from the Agoda Engineering Team. Please comment if you notice any inaccuracies.

The Problems of Multiple Financial Data Pipelines

A data pipeline is an automated system that extracts data from source systems, transforms it according to business rules, and loads it into databases where analysts can use it.

The high-level architecture of multiple data pipelines, each owned by different teams, introduced several fundamental problems that affected both data quality and operational efficiency.

First, duplicate sources became a significant issue. Many pipelines pulled data from the same upstream systems independently. This led to redundant processing, synchronization issues, data mismatches, and increased maintenance overhead. When three different teams all read from the same booking database but at slightly different times or with different queries, this wastes computational resources and can lead to scenarios where teams are looking at slightly different versions of the same underlying data.

Second, inconsistent definitions and transformations created confusion across the organization. Each team applied its own logic and assumptions to the same data sources. As a result, financial metrics could differ depending on which pipeline produced them. For instance, the Finance team might calculate revenue differently from the Analytics team, leading to conflicting reports when executives ask fundamental questions about business performance. This undermined data reliability and created a lack of trust in the numbers.

Third, the lack of centralized monitoring and quality control slowed incident response. Without a unified system for tracking pipeline health and enforcing data standards, each team implemented its own quality checks. This resulted in duplicated effort, inconsistent practices, and delays in identifying and resolving issues. When problems did occur, it was difficult to determine which pipeline was the source of the issue.

See the diagram below:

During a recent review, Agoda observed that differences in data handling and transformation across these pipelines led to inconsistencies in reporting, as well as operational delays.

The Solution: Financial Unified Data Pipeline

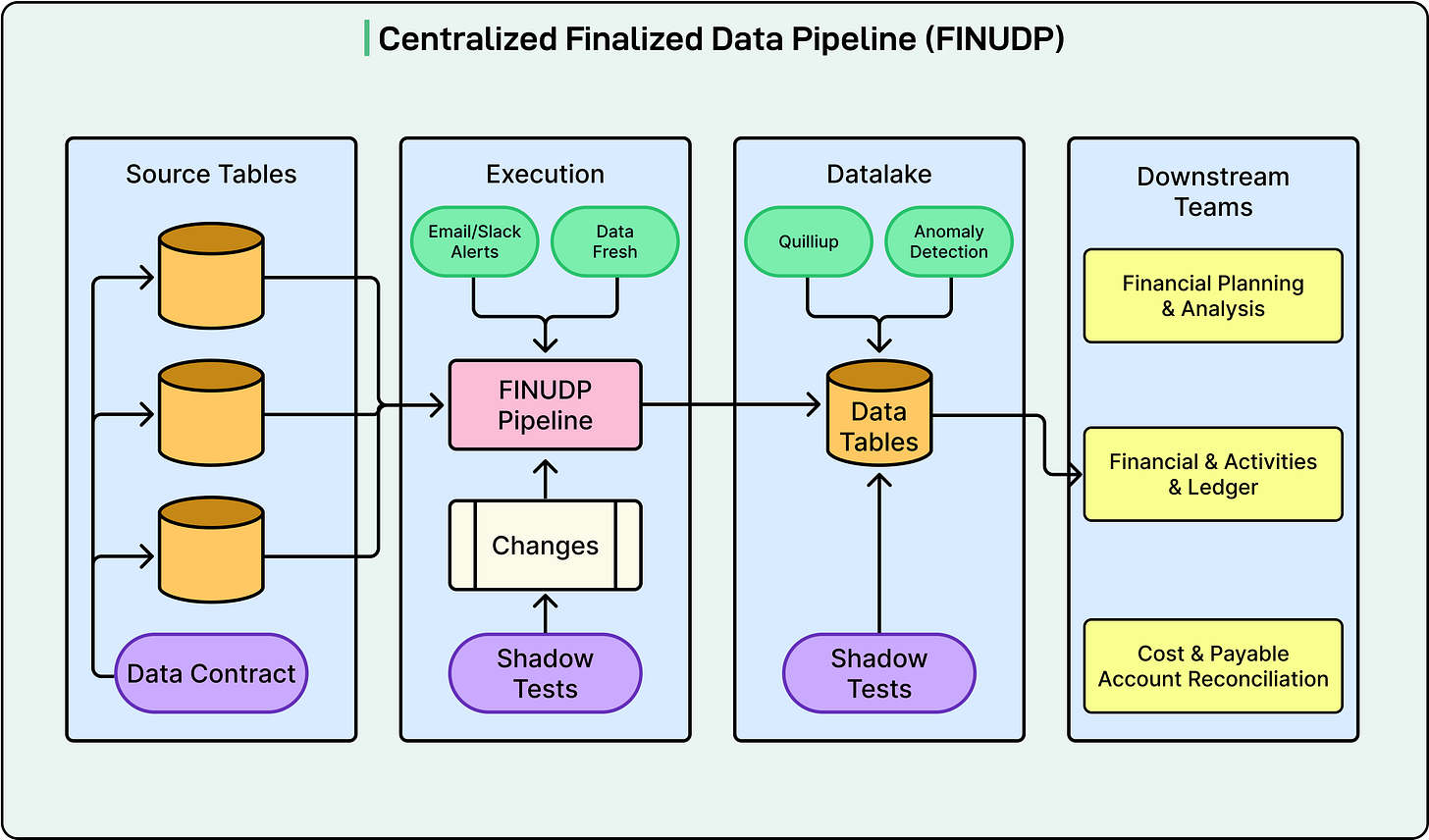

To overcome these challenges, Agoda developed a centralized financial data pipeline known as FINUDP, which stands for Financial Unified Data Pipeline.

This system delivers both high data availability and data quality. Built on Apache Spark, FINUDP processes all financial data from millions of bookings each day. It makes this data reliably available to downstream teams for reconciliation, ledger, and financial activities.

The architecture consists of several key components.

Source tables contain raw data from upstream systems such as bookings and payments.

The execution layer is where the actual data processing happens, running on Apache Spark for distributed computing power. This layer sends email and Slack alerts when jobs fail and uses an internally developed tool called GoFresh to monitor if data is updated on schedule.

The data lake is where processed data is stored, with validation mechanisms ensuring data quality.

Finally, downstream teams (including Finance, Planning, and Ledger teams) consume the data for their specific needs.

See the diagram below:

For this centralized pipeline, three non-functional requirements stood out.

Data freshness: The pipeline is designed to update data every hour, aligned with strict service level agreements for downstream consumers. To ensure these targets are never missed, Agoda uses GoFresh, which monitors table update timestamps. If an update is delayed, the system flags it, prompting immediate action to prevent any breaches of these agreements.

Reliability: Data quality checks run automatically as soon as new data lands in a table. A suite of automated validation checks investigates each column against predefined rules. Any violation triggers an alert, stopping the pipeline and allowing the team to address data issues before they cascade downstream.

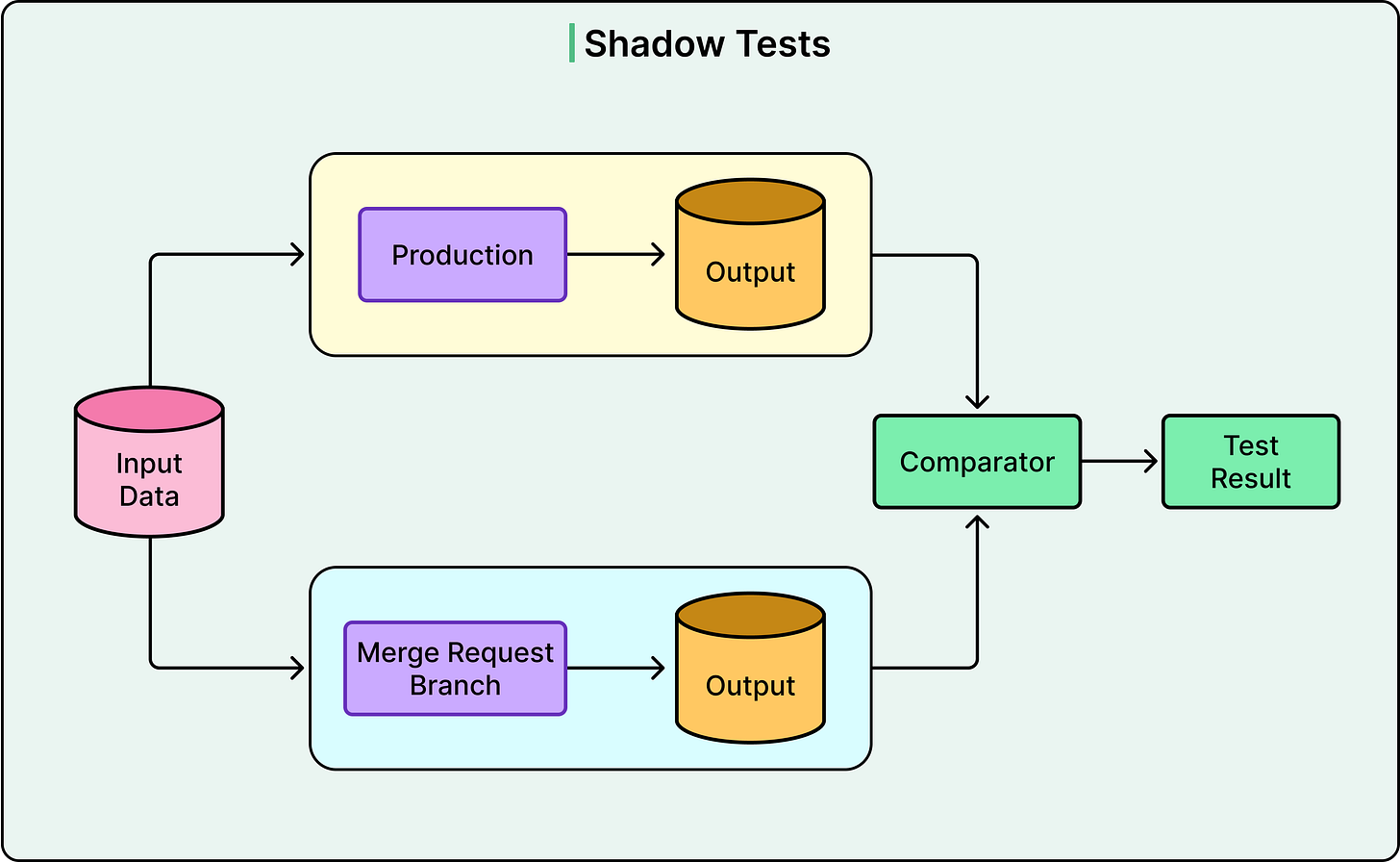

Maintainability: Each change begins with a strong, peer-reviewed design. Code reviews are mandatory, and all changes undergo shadow testing, which runs on real data in a non-production environment for comparison.
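The reliability requirement above, where each column is checked against predefined rules and any violation stops the pipeline, can be illustrated with a minimal sketch. The rule set, column names, and the `DataQualityError` exception are hypothetical and are not taken from Agoda's implementation.

```python
from typing import Any, Callable

Rule = Callable[[Any], bool]

# Hypothetical per-column rules; a real pipeline would load these from config.
RULES: dict[str, Rule] = {
    "booking_id": lambda v: isinstance(v, int) and v > 0,
    "currency": lambda v: isinstance(v, str) and len(v) == 3 and v.isupper(),
    "amount": lambda v: isinstance(v, (int, float)) and v >= 0,
}


class DataQualityError(Exception):
    """Raised to halt processing when any rule is violated."""


def validate_rows(rows: list[dict], rules: dict[str, Rule]) -> list[tuple[int, str]]:
    """Return (row_index, column) for every missing or rule-breaking value."""
    violations = []
    for index, row in enumerate(rows):
        for column, rule in rules.items():
            if column not in row or not rule(row[column]):
                violations.append((index, column))
    return violations


def check_or_halt(rows: list[dict], rules: dict[str, Rule]) -> None:
    """Stop the (hypothetical) pipeline step if any violation is found."""
    violations = validate_rows(rows, rules)
    if violations:
        raise DataQualityError(f"{len(violations)} violation(s): {violations}")


good_rows = [{"booking_id": 1, "currency": "USD", "amount": 120.5}]
bad_rows = [
    {"booking_id": 2, "currency": "usd", "amount": 10.0},  # lowercase currency
    {"booking_id": 3, "currency": "THB", "amount": -5},    # negative amount
]

check_or_halt(good_rows, RULES)  # passes silently
try:
    check_or_halt(bad_rows, RULES)
    halted = False
except DataQualityError:
    halted = True  # this is where an alert would be sent
```

Raising an exception rather than logging a warning mirrors the idea of stopping the pipeline so that bad rows do not cascade downstream.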

Technical Practices for Quality Assurance

Agoda implemented several technical practices to ensure the reliability of FINUDP. Understanding these practices provides insight into how production-grade data systems are built and maintained.

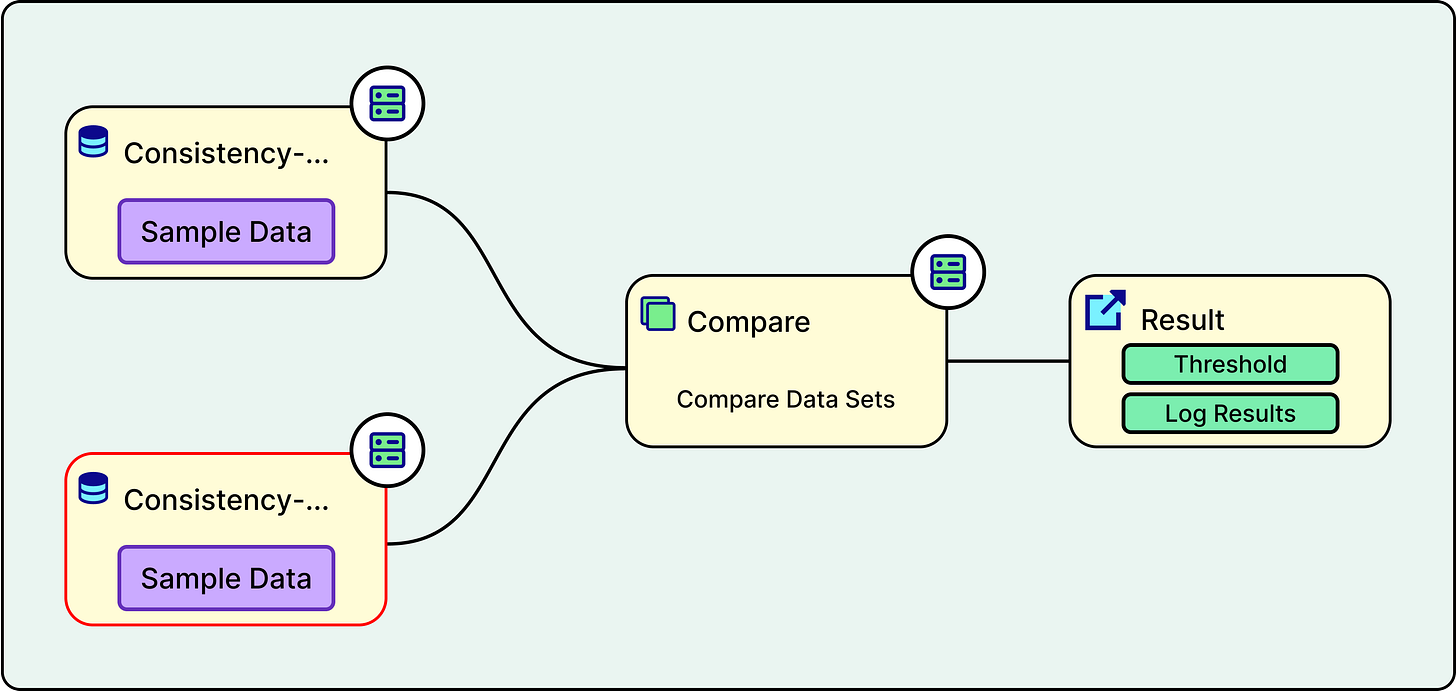

Shadow testing is one of the most important practices. When a developer makes a change to the pipeline code, the system runs both the old version and the new version on the same production data in a test environment. The outputs from both versions are then compared, and a summary of the differences is shared directly within the code review process. This provides reviewers with clear visibility into the impact of proposed changes on the data. It is an excellent way to catch unexpected side effects before they reach production.
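To make the comparison step concrete, here is a minimal sketch of a shadow-test diff. It assumes both pipeline versions produce rows identified by a unique key; the function name, the `id` key, and the sample rows are illustrative assumptions, not Agoda's tooling.

```python
def diff_outputs(old_rows: list[dict], new_rows: list[dict], key: str) -> dict:
    """Summarize differences between old and new pipeline outputs by key."""
    old = {row[key]: row for row in old_rows}
    new = {row[key]: row for row in new_rows}
    shared = old.keys() & new.keys()
    return {
        "only_in_old": sorted(old.keys() - new.keys()),
        "only_in_new": sorted(new.keys() - old.keys()),
        "changed": sorted(k for k in shared if old[k] != new[k]),
    }


# Hypothetical outputs of the current and proposed pipeline versions.
old_output = [
    {"id": 1, "revenue": 100.0},
    {"id": 2, "revenue": 50.0},
    {"id": 3, "revenue": 10.0},
]
new_output = [
    {"id": 1, "revenue": 100.0},
    {"id": 2, "revenue": 55.0},
    {"id": 4, "revenue": 7.0},
]

summary = diff_outputs(old_output, new_output, key="id")
print(summary)
```

A summary like this is small enough to attach to a code review, which is the property the practice relies on.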

See the diagram below:

The staging environment serves as a safety net between development and production. It closely mirrors the production setup, allowing Agoda to test new features, pipeline logic, schema changes, and data transformations in a controlled setting before releasing them to all users. By running the full pipeline with realistic data in staging, the team can identify and resolve issues such as data quality problems, integration errors, or performance bottlenecks without risking the integrity of production data. This approach reduces the likelihood of unexpected failures and builds confidence that every change has been thoroughly validated before going live.

Proactive monitoring for data reliability includes several mechanisms.

Daily snapshots are taken to preserve historical states of the data.

Partition count checks ensure that all expected data partitions are present. A partition is a way of dividing large tables into smaller chunks, typically by date, so queries only need to scan relevant portions of the data.

Automated alerts fire on spikes in corrupted data and when row counts deviate from expectations.
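The partition count check mentioned above can be sketched as follows, assuming one partition per calendar day. The function names and dates are hypothetical; a real check would read the partition list from the table's metadata.

```python
from datetime import date, timedelta


def expected_partitions(start: date, end: date) -> set[date]:
    """Every daily partition that should exist between start and end, inclusive."""
    days = (end - start).days
    return {start + timedelta(days=offset) for offset in range(days + 1)}


def missing_partitions(present: list[date], start: date, end: date) -> list[date]:
    """Return the expected daily partitions that are absent, in date order."""
    return sorted(expected_partitions(start, end) - set(present))


# Hypothetical partitions found in the data lake: January 3 is absent.
present = [date(2024, 1, 1), date(2024, 1, 2), date(2024, 1, 4)]
missing = missing_partitions(present, date(2024, 1, 1), date(2024, 1, 4))
print(missing)
```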

The multi-level alerting system ensures that failures are caught quickly and the right people are notified:

Email alerts are the first level, where any pipeline failure triggers an email to developers via the coordinator, enabling quick awareness and response.

Slack alerts form the second level, where a dedicated backend service monitors all running jobs and sends notifications about successes or failures directly to the development team’s Slack channels, categorized by hourly and daily frequency.

The third level involves GoFresh continuously checking the freshness of specific columns in target tables. If data is not updated within a predetermined timeframe, GoFresh notifies the Network Operations Center, a 24/7 support team that monitors all critical Agoda alerts, notifies the correct team, and coordinates emergency response sessions.
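GoFresh is an internal Agoda tool and its interface is not public, so the following is only a generic sketch of a freshness check based on update timestamps. The table names, the one-hour limit, and the function names are assumptions for illustration.

```python
from datetime import datetime, timedelta, timezone


def is_stale(last_updated: datetime, now: datetime, max_age: timedelta) -> bool:
    """True when the last update is older than the allowed age."""
    return now - last_updated > max_age


def find_stale_tables(
    last_updates: dict[str, datetime], now: datetime, max_age: timedelta
) -> list[str]:
    """Return the names of tables whose data has not been refreshed in time."""
    return sorted(
        name for name, ts in last_updates.items() if is_stale(ts, now, max_age)
    )


# Hypothetical update timestamps; a real check would query table metadata.
now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
last_updates = {
    "bookings_hourly": datetime(2024, 1, 1, 11, 30, tzinfo=timezone.utc),
    "payments_hourly": datetime(2024, 1, 1, 9, 45, tzinfo=timezone.utc),
}

stale = find_stale_tables(last_updates, now, max_age=timedelta(hours=1))
print(stale)  # tables that would be escalated
```

Passing `now` in explicitly, rather than calling the clock inside the function, keeps the check deterministic and easy to test.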

Data integrity is verified using a third-party data quality tool called Quilliup. Agoda executes predefined test cases that utilize SQL queries to compare data in target tables with their respective source tables. Quilliup measures the variation between source and target data and alerts the team if the difference exceeds a set threshold. This ensures consistency between the original data and its downstream representation.
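The threshold comparison can be illustrated without reference to Quilliup's actual interface. The sketch below assumes the source and target totals have already been computed by SQL queries; the function names, totals, and threshold are hypothetical.

```python
def relative_variation(source_total: float, target_total: float) -> float:
    """Absolute difference between target and source, relative to the source."""
    if source_total == 0:
        return 0.0 if target_total == 0 else float("inf")
    return abs(target_total - source_total) / abs(source_total)


def exceeds_threshold(source_total: float, target_total: float, threshold: float) -> bool:
    """True when the variation is large enough that the team should be alerted."""
    return relative_variation(source_total, target_total) > threshold


# Hypothetical totals, e.g. the result of SUM(amount) on each table.
source_total = 1000.0
target_total = 998.0

variation = relative_variation(source_total, target_total)
alert = exceeds_threshold(source_total, target_total, threshold=0.001)
print(variation, alert)
```

Here the target is 0.2% below the source, which exceeds the assumed 0.1% threshold, so an alert would be raised.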

Data contracts establish formal agreements with upstream teams that provide source data. These contracts define required data rules and structure. If incoming source data violates the contract, the source team is immediately alerted and asked to resolve the issue. There are two types of data contracts.

Detection contracts monitor real production data and alert when violations occur.

Preventative contracts are integrated into the continuous integration pipelines of upstream data producers, catching issues before data is even published.
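As a rough illustration, a data contract can be expressed as a mapping from field names to expected types and required flags. The contract fields and function below are hypothetical. The same check could run against production records (detection) or against sample records in an upstream CI job (preventative).

```python
# Hypothetical contract for booking records supplied by an upstream team.
CONTRACT = {
    "booking_id": {"type": int, "required": True},
    "currency": {"type": str, "required": True},
    "discount": {"type": float, "required": False},
}


def contract_violations(record: dict, contract: dict) -> list[str]:
    """Return a human-readable message for each way the record breaks the contract."""
    problems = []
    for field, spec in contract.items():
        if field not in record or record[field] is None:
            if spec["required"]:
                problems.append(f"missing required field: {field}")
            continue
        if not isinstance(record[field], spec["type"]):
            expected = spec["type"].__name__
            problems.append(f"wrong type for {field}: expected {expected}")
    return problems


valid_record = {"booking_id": 42, "currency": "USD"}
invalid_record = {"booking_id": "42", "discount": 0.1}

print(contract_violations(valid_record, CONTRACT))
print(contract_violations(invalid_record, CONTRACT))
```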

Lastly, anomaly detection utilizes machine learning models to monitor data patterns and identify unusual fluctuations or spikes in the data. When anomalies are detected, the team investigates the root cause and provides feedback to improve model accuracy, distinguishing between valid alerts and false positives.
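The source does not describe the models Agoda uses. As a deliberately simple stand-in, the sketch below flags a value using a z-score against recent history. This is a basic statistical rule, not a machine learning model, and the history values and threshold are assumptions.

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], value: float, z_threshold: float = 3.0) -> bool:
    """Flag a value that lies more than z_threshold sample standard deviations from the mean."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return value != mu
    return abs(value - mu) / sigma > z_threshold


# Hypothetical daily row counts (in thousands) for a financial table.
history = [100, 102, 98, 101, 99]

print(is_anomalous(history, 130))  # far outside the usual range
print(is_anomalous(history, 101))  # within normal variation
```

The feedback loop described above would correspond to adjusting `z_threshold`, or retraining a real model, after the team labels an alert as valid or as a false positive.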

Key Challenges Encountered

Throughout the journey of building FINUDP and migrating multiple data pipelines into one, Agoda encountered several key challenges:

Stakeholder management proved to be more complex than anticipated. Centralizing multiple data pipelines into a single platform meant that each output still served its own set of downstream consumers. This created a broad and diverse stakeholder landscape across product, finance, and engineering teams.

Performance issues emerged as a significant technical challenge. Bringing together several large pipelines and consolidating high-volume datasets into FINUDP initially incurred a performance cost. The end-to-end runtime was approximately five hours, which was far from ideal for daily financial reporting. Through a series of optimization cycles covering query tuning, partitioning strategy, pipeline orchestration, and infrastructure adjustments, Agoda was able to reduce the runtime from five hours to approximately 30 minutes.

Data inconsistency was perhaps the most conceptually challenging problem. Each legacy pipeline had evolved with its own set of assumptions, business rules, and data definitions. When these were merged into FINUDP, those differences surfaced as data inconsistencies and mismatches. To address this, Agoda went back to first principles. The team documented the intended meaning of each key metric and field, conducted detailed gap analyses comparing the outputs of different pipelines, and facilitated workshops with stakeholders to agree on a single definition for each dataset.

Architectural Trade-offs

Centralizing data pipelines came with clear benefits but also required navigating key trade-offs between competing priorities:

The most significant trade-off involved velocity. In the previous decentralized approach, independent small datasets could move through their separate pipelines without waiting on each other. With the centralized setup, Agoda established data dependencies to ensure that all upstream datasets are ready before proceeding with the entire data pipeline.

Data quality requirements also affected development speed. Since the unified pipeline includes multiple data sources and components, a change to any component requires testing and a data quality check of the whole pipeline. This slows development velocity, but Agoda accepted the trade-off in exchange for the benefits of a unified pipeline, including consistency, reliability, and a single source of truth.

Data governance became more rigorous and time-consuming. Migrating to a single pipeline required that every transformation be meticulously documented and reviewed. Since financial data is at stake, full alignment and approval from all stakeholders are needed before changing upstream processes. The need for thorough vetting and consensus slowed implementation, but ultimately built a foundation for trust and integrity in the new unified system. In essence, centralization increases reliability and transparency, but it demands tighter coordination and careful change management at every step.
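The dependency gating described in the velocity trade-off, where the pipeline proceeds only once all upstream datasets are ready, can be sketched as a simple set comparison. The dataset names and function are hypothetical and do not reflect Agoda's orchestration setup.

```python
def ready_to_run(required: list[str], ready: list[str]) -> tuple[bool, list[str]]:
    """Return whether the pipeline may start and which upstream datasets still block it."""
    missing = sorted(set(required) - set(ready))
    return (not missing, missing)


# Hypothetical upstream datasets the unified pipeline depends on.
required = ["bookings", "payments", "refunds"]

can_start, blocking = ready_to_run(required, ready=["bookings", "payments"])
print(can_start, blocking)
```

The sketch also shows the cost: a single late dataset, here `refunds`, holds back outputs that in a decentralized setup would have been delivered independently.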

Conclusion

Consolidating financial data pipelines at Agoda has made a real difference in how the company handles and trusts its financial metrics.

Through FINUDP, Agoda has established a single source of truth for all financial metrics. By introducing centralized monitoring, automated testing, and robust data quality checks, the team has significantly improved both the reliability and availability of data. This setup gives downstream teams access to accurate and consistent information.

Last year, the data pipeline achieved 95.6% uptime (with a goal to reach 99.5% data availability). Maintaining such high data standards is always a work in progress, but with these systems in place, Agoda is better equipped to catch issues early and collaborate across teams.
