The Algorithm That Powers Your X (Twitter) Post

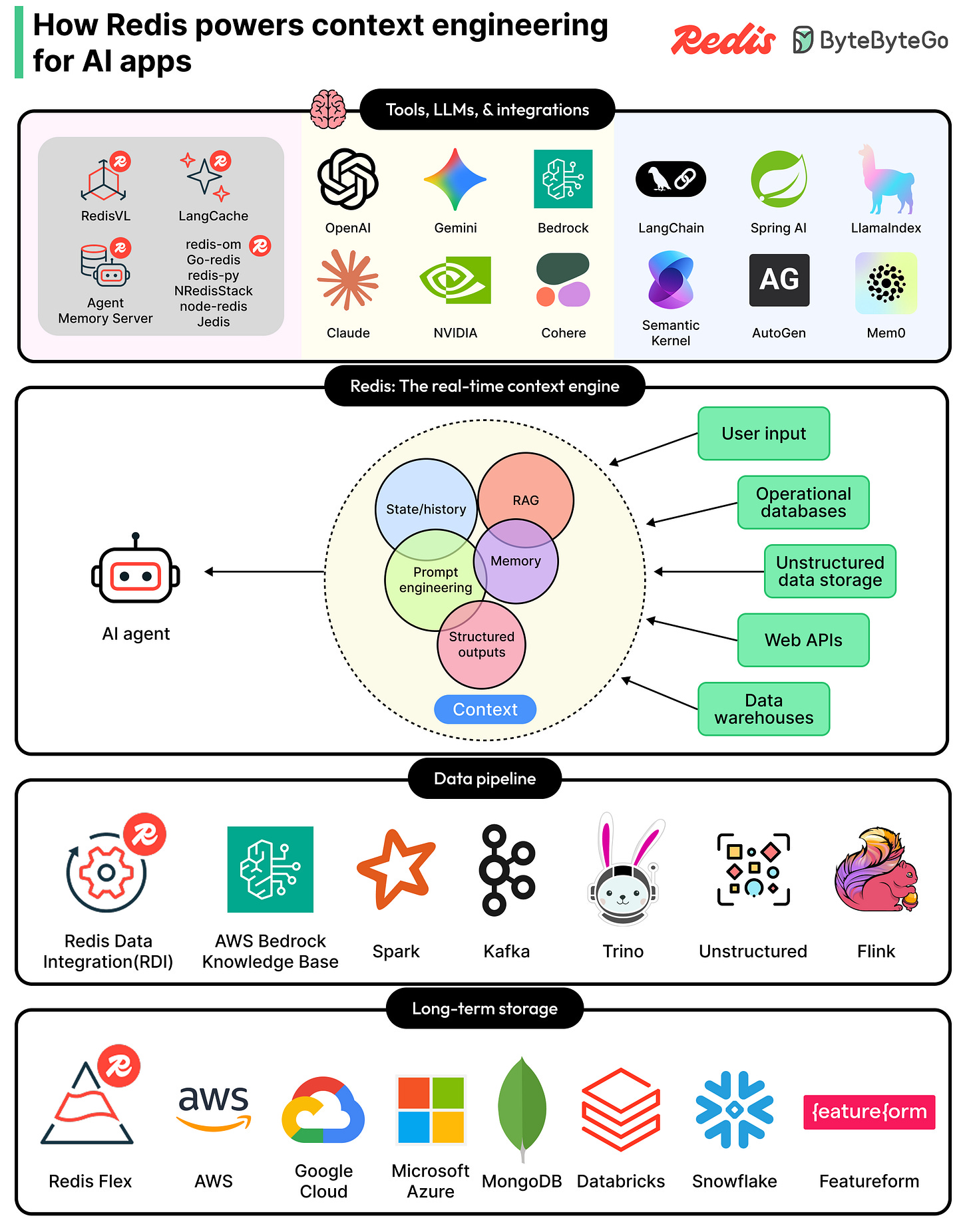

The right data. The right time.(Sponsored)

Context engineering is the new critical layer in every production AI app, and Redis is the real-time context engine powering it. Redis gathers, syncs, and serves the right mix of memory, knowledge, tools, and state for each model call, all from one unified platform. Search across RAG, short- and long-term memory, and structured and unstructured data without stitching together a fragile multi-tool stack. With 30+ agent framework integrations across OpenAI, LangChain, Bedrock, NVIDIA NIM, and more, Redis fits the stack your teams are already building on. Accurate, reliable AI apps that scale. Built on one platform.

Every time we open X (formerly Twitter) and scroll through the “For You” tab, a recommendation system is deciding which posts to show and in what order. This recommendation system works in real-time.

In the world of social media, this is a big deal because any latency issues can cause user dissatisfaction.

Until now, the internal workings of this recommendation system were more or less a mystery. However, recently, the xAI engineering team open-sourced the algorithm that powers this feed, publishing it on GitHub under an Apache-2.0 license. It reveals a system built on a Grok-based transformer model that has replaced nearly all hand-crafted rules with machine learning.

In this article, we will look at what the algorithm does, how its components fit together, and why the xAI Engineering Team made the design choices they did.

Disclaimer: This post is based on publicly shared details from the xAI Engineering Team. Please comment if you notice any inaccuracies.

The Big Picture

When you request the For You feed in X, the algorithm draws from two separate sources of content:

The first source is called in-network content. These are posts from accounts you already follow. If you follow 200 people, the system looks at what those 200 people have posted recently and considers them as candidates for your feed.

The second source is called out-of-network content. These are posts from accounts you do not follow. The algorithm discovers them by searching across a global pool of posts using a machine learning technique called similarity search. The idea behind this is that if your past behavior suggests you would find a post interesting, that post becomes a candidate even if you have never heard of the author.

Both sets of candidates are then merged into a single list, scored, filtered, and ranked. The top-ranked posts are what you see when you open the app.

The Four Core Components

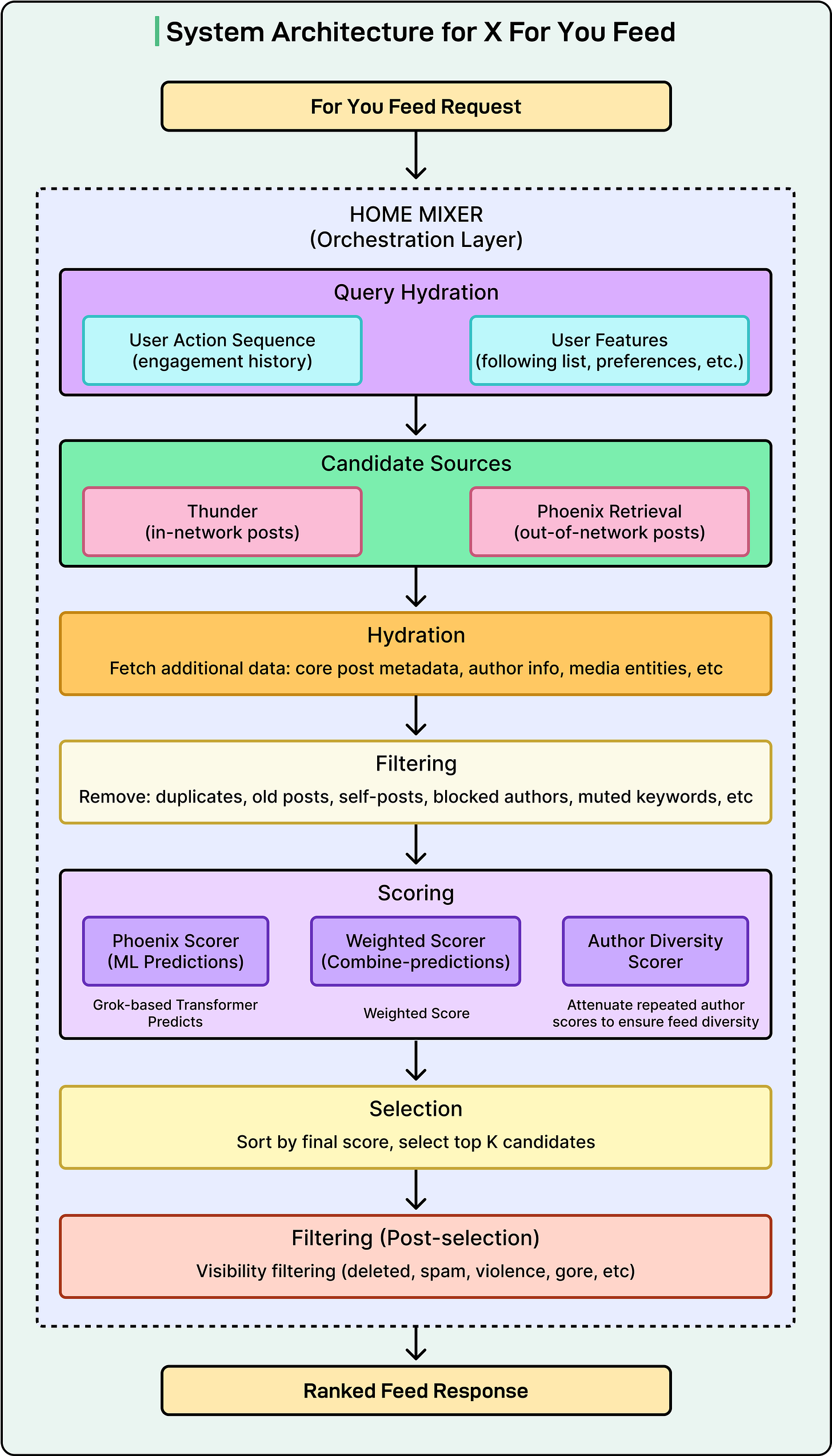

The diagram below shows the overall architecture of the system built by the xAI engineering team:

The codebase is organized into four main directories, each representing a distinct part of the system. The entire codebase is written in Rust (62.9%) and Python (37.1%).

Home Mixer

Home Mixer is the orchestration layer. It acts as the coordinator that calls the other components in the right order and assembles the final feed. It is not doing the heavy ML work itself, but just managing the pipeline.

When a request comes in, Home Mixer kicks off several stages in sequence:

Fetching user context

Retrieving candidate posts

Enriching those posts with metadata

Filtering out the ineligible ones, scoring the survivors

Selecting the top results and running final checks.

The server exposes a gRPC endpoint called ScoredPostsService that returns the ranked list of posts for a given user.

Thunder

Thunder is an in-memory post store and real-time ingestion pipeline. It consumes post creation and deletion events from Kafka and maintains per-user stores for original posts, replies, reposts, and video posts.

When the algorithm needs in-network candidates, it queries Thunder, which can return results in sub-millisecond time because everything lives in memory rather than in an external database. Thunder also automatically removes posts that are older than a configured retention period, keeping the data set fresh.

Phoenix

Phoenix is the ML brain of the system. It has two jobs:

Job 1: Retrieval

Phoenix uses a two-tower model to find out-of-network posts:

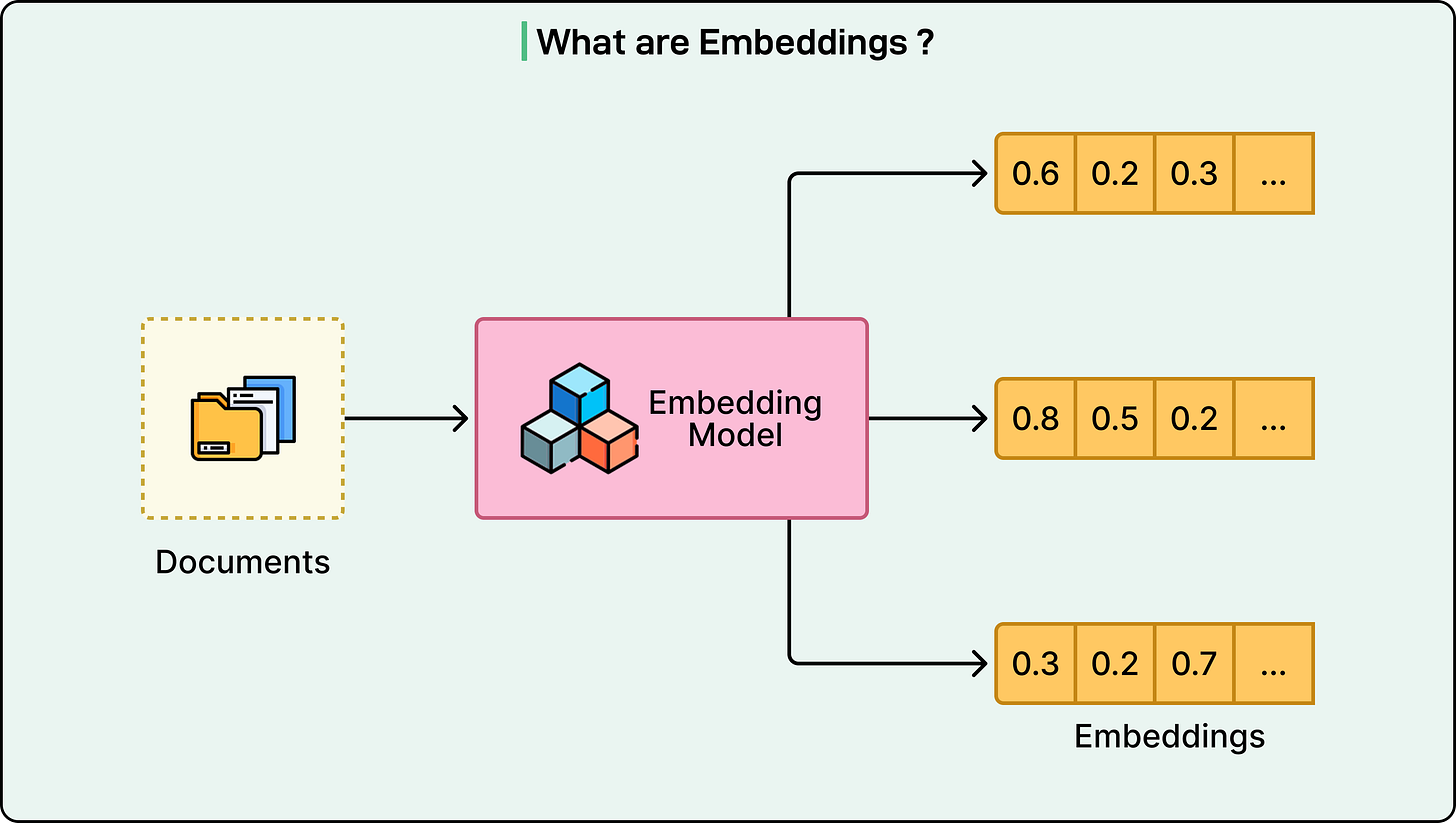

One tower (the User Tower) takes your features and engagement history and encodes them into a mathematical representation called an embedding.

The other tower (the Candidate Tower) encodes every post into its own embedding.

Finding relevant posts then becomes a similarity search. The system computes a dot product between your user embedding and each candidate embedding and retrieves the top-K most similar posts. If you are unfamiliar with dot products, the core idea is that two embeddings that “point in the same direction” in a high-dimensional space produce a high score, meaning the post is likely relevant to you.

See the diagram below that shows the concept of embeddings:

Job 2: Ranking

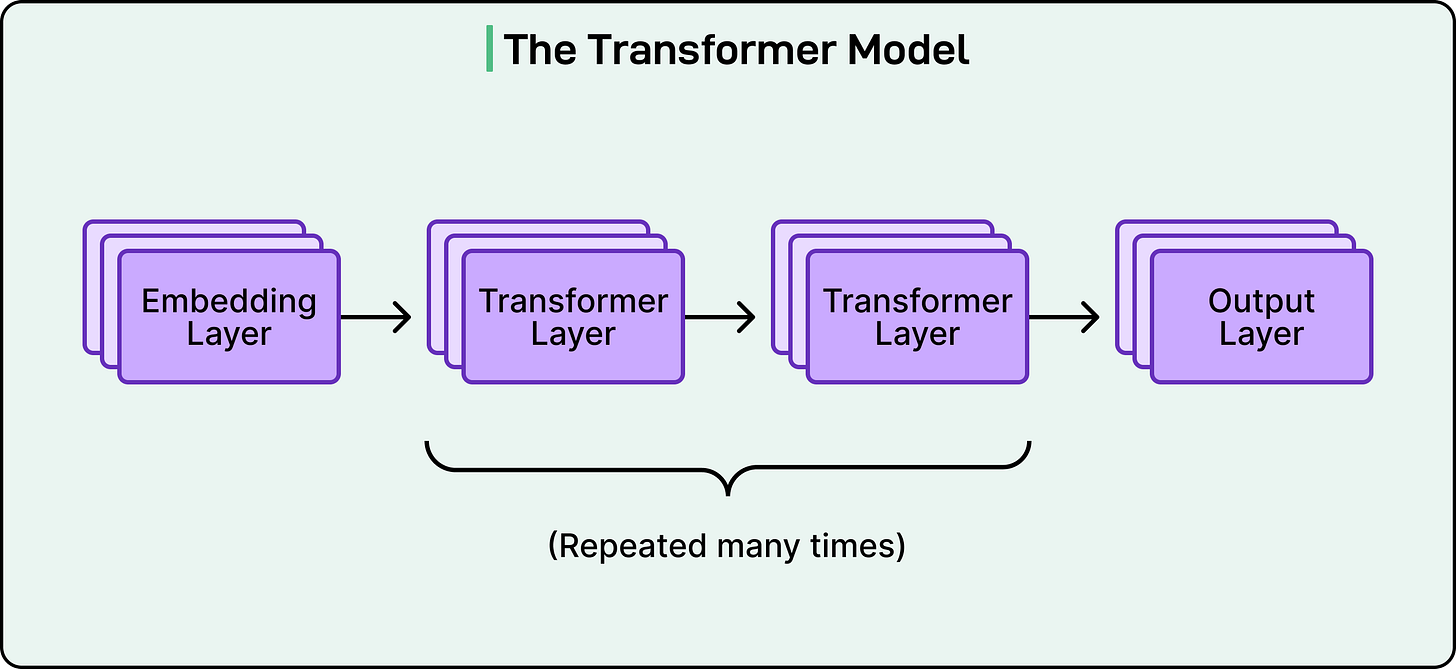

Once candidates have been retrieved from both Thunder and Phoenix’s retrieval step, Phoenix runs a Grok-based transformer model to predict how likely you are to engage with each post.

See the diagram below that shows the concept of a transformer model:

The transformer implementation is ported from the Grok-1 open source release by xAI, adapted for recommendation use cases. It takes your engagement history and a batch of candidate posts as input and outputs a probability for each type of engagement action.

Candidate Pipeline

The Candidate Pipeline is a reusable framework that defines the structure of the whole recommendation process.

It provides traits (interfaces, in Rust terminology) for each stage of the pipeline:

Source (fetch candidates)

Hydrator (enrich candidates with extra data)

Filter (remove ineligible candidates)

Scorer (compute scores)

Selector (sort and pick the top candidates)

SideEffect (run asynchronous tasks like caching and logging).

The framework runs independent stages in parallel where possible and includes configurable error handling. This modular design makes it straightforward for the xAI Engineering Team to add new data sources or scoring models without rewriting the pipeline logic.

The Pipeline Step by Step

Here is the full sequence that runs every time you open the For You feed:

Query Hydration: The system fetches your recent engagement history (what you liked, replied to, and reposted) and your metadata, such as your following list.

Candidate Sourcing: Thunder provides recent posts from accounts you follow. Phoenix Retrieval provides ML-discovered posts from the global corpus.

Candidate Hydration: Each candidate post is enriched with additional information: its text and media content, the author’s username and verification status, video duration if applicable, and subscription status.

Pre-Scoring Filters: Before any scoring happens, the system removes posts that are duplicates, too old, authored by you, from accounts you have blocked or muted, containing keywords you have muted, posts you have already seen, or ineligible subscription content.

Scoring: The remaining candidates pass through multiple scorers in sequence. First, the Phoenix Scorer gets ML predictions from the transformer. Then, the Weighted Scorer combines those predictions into a single relevance score. Next, an Author Diversity Scorer reduces the score of posts from repeated authors so your feed is not dominated by one person. Finally, an OON (out-of-network) Scorer adjusts scores for posts from accounts you do not follow.

Selection: Posts are sorted by their final score, and the top K are selected.

Post-Selection Filters: A final round of checks removes posts that have been deleted, flagged as spam, or identified as containing violent or graphic content. A conversation deduplication filter also ensures you do not see multiple branches of the same reply thread.

How Scoring Works

The Phoenix transformer predicts probabilities for a wide range of user actions: liking, replying, reposting, quoting, clicking, visiting the author’s profile, watching a video, expanding a photo, sharing, dwelling (spending time reading), following the author, marking “not interested,” blocking the author, muting the author, and reporting the post.

Each of these predicted probabilities is multiplied by a weight and then summed to produce a final score. Positive actions like liking, reposting, and sharing carry positive weights. Negative actions like blocking, muting, and reporting carry negative weights. This means that if the model predicts you are likely to block the author of a post, that post’s score gets pushed down significantly. The formula is simple:

Final Score = sum of (weight for action * predicted probability of that action)

This multi-action prediction approach is more nuanced than a single “relevance” score because it lets the system distinguish between content you would enjoy and content you would find annoying or harmful.

Conclusion

There are five architectural choices worth understanding from xAI’s recommendation system:

Instead of humans deciding which signals matter (post length, hashtag count, time of day), the Grok-based transformer learns what matters directly from user engagement sequences. This simplifies the data pipelines and serving infrastructure.

When the transformer scores a batch of candidate posts, each post can only “attend to” (or look at) the user’s context. It cannot attend to the other candidates in the same batch. This design choice ensures that a post’s score does not change depending on which other posts happen to be in the same batch. It makes scores consistent and cacheable, which is important at the scale X operates at.

Both the retrieval and ranking stages use multiple hash functions for embedding lookup.

Rather than collapsing everything into a single relevance number, the model predicts probabilities for many distinct actions. This gives the Weighted Scorer fine-grained control over what the feed optimizes for.

The Candidate Pipeline framework separates the pipeline’s execution logic from the business logic of individual stages. This makes it easy to add a new data source, swap in a different scoring model, or insert a new filter without touching the rest of the system.

References:

this is a great article that helped make sense of it for me. thank u ! i thoroughly enjoyed it even tho im not a software engineer.