The Security Architecture of GitHub Agentic Workflow

npx workos: From Auth Integration to Environment Management, Zero ClickOps (Sponsored)

npx workos@latest launches an AI agent, powered by Claude, that reads your project, detects your framework, and writes a complete auth integration into your codebase. No signup required. It creates an environment, populates your keys, and you claim your account later when you're ready.

But the CLI goes way beyond installation. WorkOS Skills make your coding agent a WorkOS expert. workos seed defines your environment as code. workos doctor finds and fixes misconfigurations. And once you're authenticated, your agent can manage users, orgs, and environments directly from the terminal. No more ClickOps.

GitHub built an AI agent that can fix documentation, write tests, and refactor code while you sleep. Then they designed their entire security architecture around the assumption that this agent might try to steal your API keys, spam your repository with garbage, and leak your secrets to the internet.

This can be considered paranoia, but it’s the only responsible way to put a non-deterministic system inside your CI/CD pipeline.

GitHub Agentic Workflows let you plug AI agents into GitHub Actions so they can triage issues, generate pull requests, and handle routine maintenance without human supervision. The appeal is clear, but so is the risk. These agents consume untrusted inputs, make decisions at runtime, and can be manipulated through prompt injection, where carefully crafted text tricks the agent into doing things it wasn’t supposed to do.

In this article, we will look at how GitHub built a security architecture that assumes the agent is already compromised. However, to understand their solution, you first need to understand why the problem is harder than it looks.

Disclaimer: This post is based on publicly shared details from the GitHub Engineering Team. Please comment if you notice any inaccuracies.

Why Agents Break the CI/CD Contract

CI/CD pipelines are built on a simple assumption. The developers define the steps, the system runs them, and every execution is predictable. All the components in a pipeline share a single trust domain, meaning they can all see the same secrets, access the same files, and talk to the same network. That shared environment is actually a feature for traditional automation. When every component is a deterministic script, sharing a trust domain makes everything composable and fast.

Agents break that assumption completely because they don’t follow a fixed script. They reason over repository state, consume inputs they weren’t specifically designed for, and make decisions at runtime. A traditional CI step either does exactly what you coded it to do or fails. An agent might do something you never anticipated, especially if it processes an input designed to manipulate it.

GitHub’s threat model for Agentic Workflows is blunt.

They assume the agent will try to read and write state that it shouldn’t, communicate over unintended channels, and abuse legitimate channels to perform unwanted actions. For example, a prompt-injected agent with access to shell commands can read configuration files, SSH keys, and Linux /proc state to discover credentials. It can scan workflow logs for tokens. Once it has those secrets, it can encode them into a public-facing GitHub object like an issue comment or pull request for an attacker to retrieve later. The agent isn’t actively malicious, but following instructions that it couldn’t distinguish between legitimate ones.

In a standard GitHub Actions setup, everything runs in the same trust domain on top of a runner virtual machine. A rogue agent could interfere with MCP servers (the tools that extend what an agent can do), access authentication secrets stored in environment variables, and make network requests to arbitrary hosts. A single compromised component gets access to everything. The problem isn’t that Actions are insecure. It’s that agents change the assumptions that made a shared trust domain safe in the first place.

[Live on May 6] Stop babysitting your agents (Sponsored)

Agents can generate code. Getting it right for your system, team conventions, and past decisions is the hard part. You end up babysitting the agent and watch the token costs climb.

More MCPs, rules, and bigger context windows give agents access to information, but not understanding. The teams pulling ahead have a context engine to give agents only what they need for the task at hand.

Our April webinar filled up, so we are bringing it back! Join us live (FREE) on May 6 to see:

Where teams get stuck on the AI maturity curve and why common fixes fall short

How a context engine solves for quality, efficiency, and cost

Live demo: the same coding task with and without a context engine

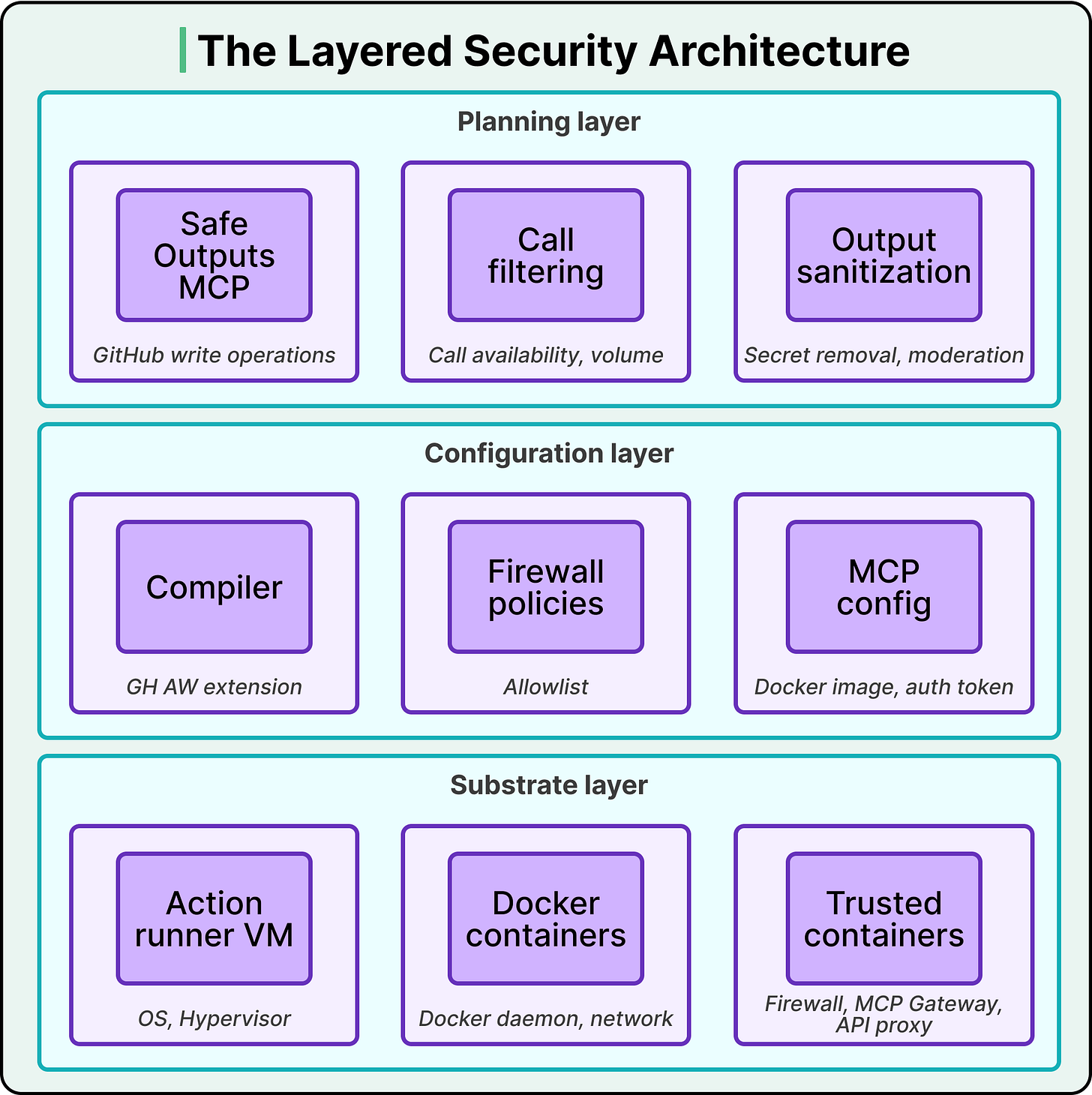

Three Layers of Distrust

GitHub Agentic Workflows use a layered security architecture with three distinct levels.

Each layer limits the impact of failures in the layer above it by enforcing its own security properties independently.

The substrate layer sits at the bottom. It’s built on a GitHub Actions runner VM and several Docker containers, including a set of trusted containers that mediate privileged operations. This layer provides isolation between components, controls system calls, and enforces kernel-level communication boundaries. These protections hold even if an untrusted component is fully compromised and executes arbitrary code within its container. The substrate doesn’t rely on the agent behaving correctly, and even arbitrary code execution inside the agent’s container hits a wall at this level.

The configuration layer sits on top of the substrate layer. This is where the system’s structure gets defined. It includes declarative artifacts and the toolchains that interpret them to set up which components are loaded, how they’re connected, what communication channels are permitted, and what privileges are assigned. The most important piece in this layer is the compiler. GitHub doesn’t just run your workflow definition as-is, but compiles it into a GitHub Action with explicit constraints on permissions, outputs, auditability, and network access. The configuration layer also controls which secrets go into which containers. Externally minted tokens like agent API keys and GitHub access tokens are loaded only into the specific containers that need them, never into the agent’s container.

The planning layer sits on top. While the configuration layer dictates which components exist and how they communicate, the planning layer dictates which components are active over time. Its job is to create staged workflows with explicit data exchanges between stages. The safe outputs subsystem, which we’ll get to shortly, is the most important instance of this. It ensures the agent’s work gets reviewed before it affects anything real.

These layers are independent. If the planning layer fails, the configuration layer still enforces its constraints. If the configuration layer has a bug, the substrate layer still provides isolation. No single failure compromises the whole system.

Not Trusting Agents With Secrets

From the beginning, GitHub wanted workflow agents to have zero access to secrets.

In a standard GitHub Actions setup, sensitive material like agent authentication tokens and MCP server API keys sits in environment variables and configuration files visible to all processes on the runner VM. That’s fine when everything sharing the environment is trusted. It’s dangerous with agents because they’re susceptible to prompt injection. An attacker can hide malicious instructions in a web page, a repository issue, or a pull request comment, and trick the agent into extracting and leaking whatever it can find.

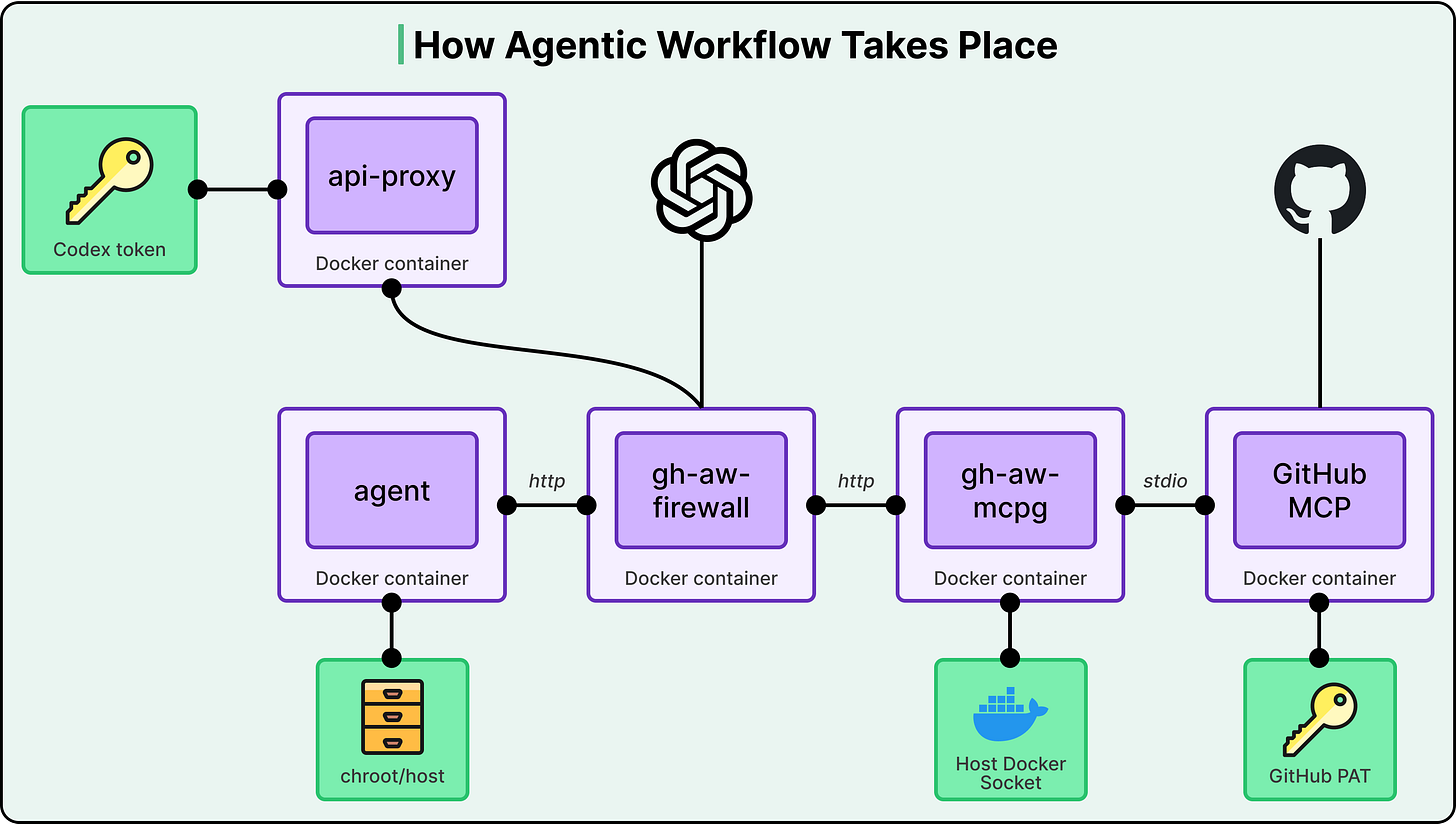

GitHub’s solution is a specific container topology where secrets are physically unreachable from the agent.

The agent runs in its own Docker container on a private network. All internet access goes through a dedicated firewall container (gh-aw-firewall). MCP tools are accessed through a separate trusted container called the MCP gateway (gh-aw-mcpg), which launches MCP servers and holds exclusive access to MCP authentication material like the GitHub Personal Access Token. LLM API calls go through an isolated API proxy that holds the model’s authentication token (like the Codex token). The agent communicates with the firewall over HTTP, the firewall connects to the MCP gateway over HTTP, and the gateway connects to MCP servers over stdio.

See the diagram below:

At no point in this chain does the agent touch a secret. It can make LLM calls because the proxy adds authentication on its behalf. It can use MCP tools because the gateway authenticates on its behalf. But the agent itself is completely secretless.

There’s a real tradeoff here, and GitHub names it explicitly. Coding workloads need broad access to compilers, interpreters, scripts, and repository state. But you can’t just install all those tools inside the agent’s container without expanding the set of network destinations allowed through the firewall and duplicating a lot of the host setup.

Their resolution is elegant, but they mount the entire VM host filesystem read-only at /host inside the agent’s container. Then they overlay sensitive paths (the ones containing secrets and configuration) with empty tmpfs layers, which are temporary in-memory filesystems that effectively hide whatever was at those paths. Finally, they launch the agent in a chroot jail rooted at /host. The result is that the agent can access every compiler, interpreter, and tool on the host machine while being unable to discover any secret material. The host-side setup stays intact. The agent’s writable and discoverable surface is constrained to only what it needs. If you work with containers, this pattern of mounting a host filesystem read-only and masking sensitive paths with tmpfs is applicable well beyond the agentic context.

GitHub isn’t the only one that arrived at this zero-secret principle. OpenAI’s Codex takes a different path to the same destination. In the Codex cloud, secrets are available only during the setup phase and are removed before the agent phase starts, and internet access is disabled by default during execution. GitHub uses proxies and gateways. OpenAI uses a two-phase model. The fact that both teams independently converged on “agents should never touch secrets” validates the principle.

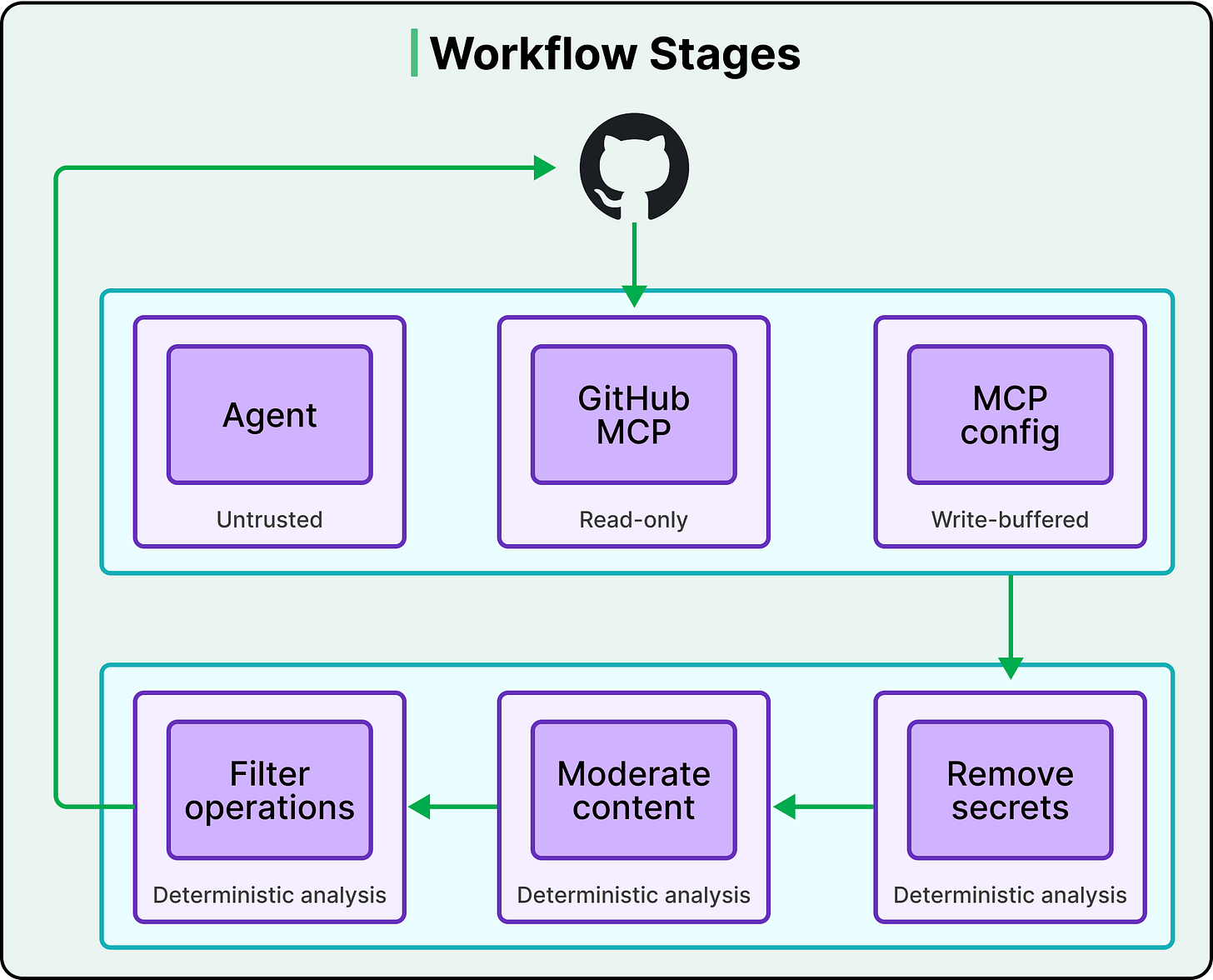

Every Output Gets Vetted

Prompt-injected agents can cause real harm even without access to secrets. A rogue agent could spam a repository with pointless issues and pull requests to overwhelm maintainers, or inject objectionable URLs and content into repository objects. The absence of secrets doesn’t mean the absence of risk.

GitHub’s answer is the safe outputs system, and it’s the most distinctive part of their architecture.

Here’s how it works:

The agent can read GitHub state through the GitHub MCP server, which operates in read-only mode. But it cannot write to GitHub directly. All write operations go through a separate safe output MCP server, which only buffers the agent’s intended changes without executing them.

Once the agent finishes its work and exits, everything it staged goes through a deterministic analysis pipeline.

This pipeline does three things.

First, it checks the type of each operation against an allowlist that the workflow author defined. If the author said the agent can create issues and comments but not pull requests, any staged PR gets dropped.

Second, it enforces quantity limits. An agent might be restricted to creating at most three pull requests per run.

Third, it runs content sanitization, scanning for secrets that might have leaked into the output text, removing URLs, and running content moderation checks.

Only outputs that survive the entire pipeline get committed to GitHub. Every stage’s side effects are explicit and vetted.

The compiler plays an important role here, too. When it decomposes a workflow into stages, it defines for each stage the active components and their permissions (read versus write), the data artifacts that stage can emit, and the admissible downstream consumers of those artifacts.

The workflow author declares upfront what the agent is allowed to produce, and the system enforces those declarations deterministically. Since the pipeline uses deterministic analysis, it can only catch patterns that GitHub anticipated. A truly novel attack vector might slip through, which is exactly why the other layers exist. No single layer is the complete answer.

The Logging Strategy

Agents are determined to accomplish their tasks by any means and can have a surprisingly deep toolbox of tricks for doing so. When an agent behaves unexpectedly, you need full visibility into what happened.

Agentic Workflows make observability a first-class architectural property by logging at every trust boundary.

Network and destination-level activity gets recorded at the firewall.

Model request/response metadata and authenticated requests are captured by the API proxy.

Tool invocations are logged by the MCP gateway and MCP servers.

GitHub even adds internal instrumentation to the agent container to audit potentially sensitive actions like environment variable accesses.

Together, these logs support full forensic reconstruction, policy validation, and detection of anomalous behavior.

But there’s a more important long-term play here. Every point where you can observe communication is also a point where you can mediate it. GitHub is building the observation infrastructure now with future control in mind. They already support a lockdown mode for the GitHub MCP server, and they plan to introduce controls that enforce policies across MCP servers based on whether repository objects are public or private, and based on who authored them.

The Trade-Offs

Every security decision GitHub made came with a cost.

Security versus utility is the most obvious tension. Agents running inside GitHub’s architecture are more constrained than a developer working locally. The chroot approach gives agents access to host tools, but the firewall still limits network access, and the safe outputs pipeline still restricts what the agent can produce. In other words, more security means less flexibility.

Strict-by-default is a strong opinion. Most other coding agents make sandboxing opt-in. Claude Code and Gemini CLI both require you to turn on their sandbox features. GitHub Agentic Workflows run in strict security mode by default. That’s a deliberate choice to prioritize safety over developer convenience, and it won’t be the right tradeoff for every use case.

Prompt injection remains fundamentally unsolved. GitHub’s architecture is a damage containment strategy, not a prevention strategy. It limits the blast radius when an agent gets tricked, but it can’t prevent the issue itself. And the deterministic vetting in the safe outputs pipeline can only catch patterns that were anticipated. A novel attack vector might need a new pipeline stage.

The architecture is also complex, involving multiple containers, proxies, gateways, a compilation step, and a staged output pipeline. This is engineering overhead that makes sense at GitHub’s scale. For simpler use cases, we might not need every piece.

Conclusion

As AI agents become standard in development tooling, the question will shift from whether to sandbox to building a complete security architecture.

GitHub’s four principles offer a transferable framework:

Defend in depth with independent layers.

Keep agents away from secrets by architecture, not policy.

Vet every output through deterministic analysis before it affects the real world.

Log everything at every trust boundary, because today’s observability is tomorrow’s control plane.

References:

“Agents break that assumption completely because they don’t follow a fixed script. They reason over repository state, consume inputs they weren’t specifically designed for, and make decisions at runtime. “

totally nailed it with this imo. fantastic point. it’s a real change that people are being quite slow to adapt to.