Top AI GitHub Repositories in 2026

The AI open-source ecosystem has entered an extraordinary growth phase.

GitHub’s Octoverse 2025 report revealed that over 4.3 million AI-related repositories now exist on the platform, with LLM-focused projects alone jumping 178% year over year. In this environment, a select group of repositories has emerged as clear frontrunners, each amassing tens or even hundreds of thousands of stars by offering developers the tools to build autonomous agents, deploy models locally, and streamline AI-powered workflows.

Let’s look at the most impactful AI repositories trending on GitHub right now, covering what they do, why they matter, and how they fit into the broader AI landscape.

OpenClaw

OpenClaw is the breakout star of 2026 and arguably the fastest-growing open-source project in GitHub history.

Created by PSPDFKit founder Peter Steinberger, it surged from 9,000 to over 60,000 stars in just a few days after going viral in late January 2026, and has since blown past 210,000 stars. The project was originally named Clawdbot, then Moltbot, and finally settled on OpenClaw.

At its core, OpenClaw is a personal AI assistant that runs entirely on your own devices. It operates as a local gateway connecting AI models to over 50 integrations, including WhatsApp, Telegram, Slack, Discord, Signal, and iMessage. Unlike cloud-based assistants, your data never leaves your machine. The assistant is always on, capable of browsing the web, filling out forms, running shell commands, writing and executing code, and controlling smart home devices. What sets it apart from other AI tools is its ability to write its own new skills, effectively extending its own capabilities without manual intervention.

OpenClaw has found use across developer workflow automation, personal productivity management, web scraping, browser automation, and proactive scheduling. On February 14, 2026, Steinberger announced he would be joining OpenAI, and the project would transition to an open-source foundation. Security researchers have raised valid concerns about the broad permissions the agent requires to function, and the skill repository still lacks rigorous vetting for malicious submissions, so users should be mindful of these risks when configuring their instances.

n8n

n8n is an open-source workflow automation platform that combines a visual, no-code interface with the flexibility of custom code, now enhanced with native AI capabilities. It offers over 400 integrations, can be self-hosted, and is distributed under a fair-code license, which gives technical teams full control over their automation pipelines and data.

The platform stands out due to its AI-native approach. Users can incorporate large language models directly into their workflows via LangChain integration. They can build custom AI agent automations alongside traditional API calls, data transformations, and conditional logic. This bridges the gap between conventional business automation tools and cutting-edge AI agent workflows. For enterprises with strict data governance requirements, the self-hosting option is particularly valuable.

Common applications include AI-driven email triage, automated content pipelines, customer support agent flows, data enrichment workflows, and multi-step AI processing chains.

Ollama

In a landscape dominated by cloud API subscriptions, Ollama took the opposite approach. It is a lightweight framework written in Go for running and managing large language models entirely on your own hardware. No data is sent to external services, and the entire experience is designed to work offline.

Ollama provides simple commands to download, run, and serve models locally, supporting Llama, Mistral, Gemma, DeepSeek, and a growing list of others. It includes desktop apps for macOS and Windows, which means even non-developers can get started with local AI. Its partnerships to support open-weight models from major research labs drove a massive surge of interest.
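To make the "serve models locally" part concrete, here is a minimal sketch of calling a local Ollama server from Python using only the standard library. It assumes the server is listening on its default address (`http://localhost:11434`) and that a model has already been pulled; the model name `llama3.2` and the helper names are illustrative choices, not something the project prescribes.

```python
import json
import urllib.request

# Assumption: Ollama is serving on its default local address.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the request and return the generated text from the JSON reply."""
    request = build_generate_request(model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["response"]


# Example call (needs a running server and a pulled model):
# print(generate("llama3.2", "Explain what a local LLM runtime does."))
```

Because the request goes only to `localhost`, the prompt and the response stay on the machine, which is the property the project is built around.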

The project has become the backbone of the local AI movement, enabling developers to experiment with and deploy LLMs in privacy-critical or cost-sensitive environments. It pairs well with tools like Open WebUI to create a fully self-hosted alternative to commercial AI chat products.

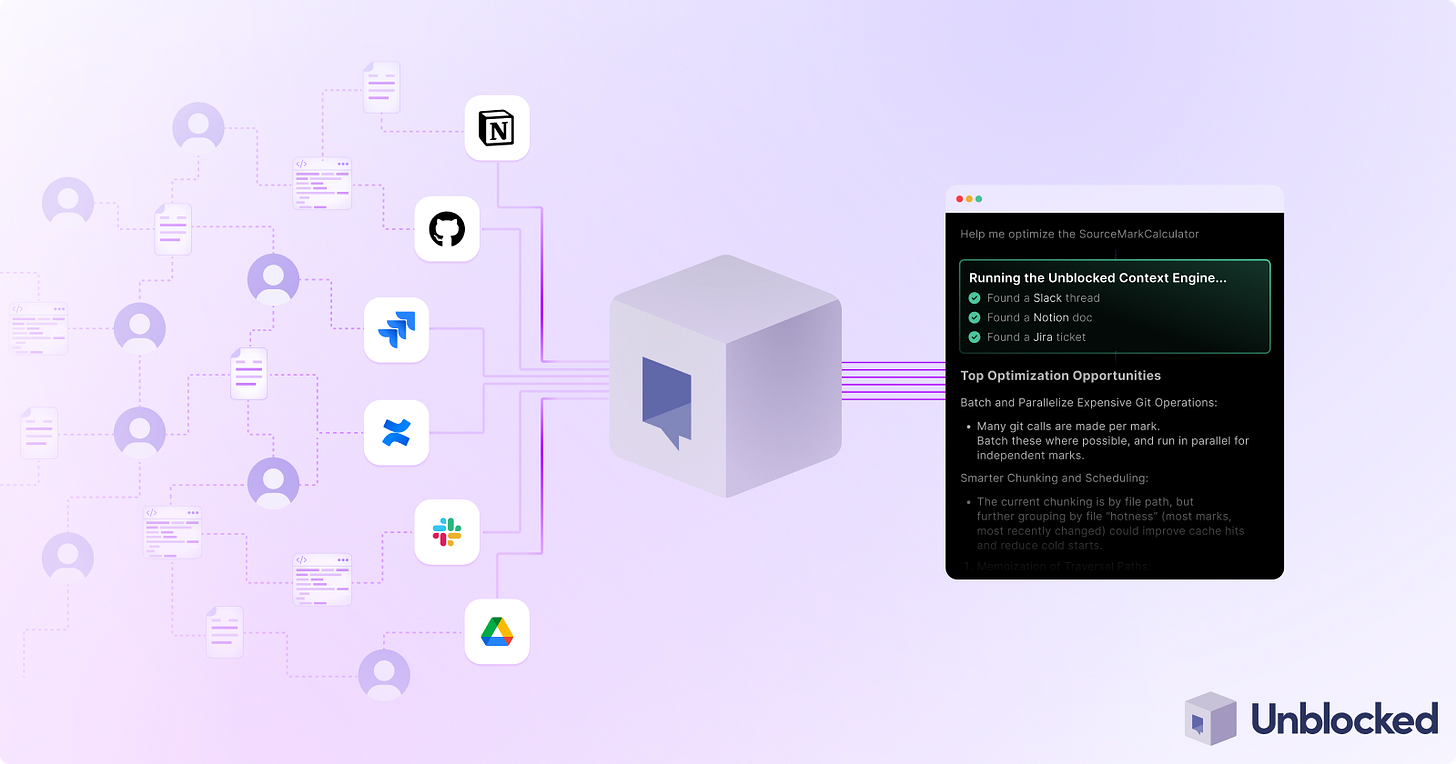

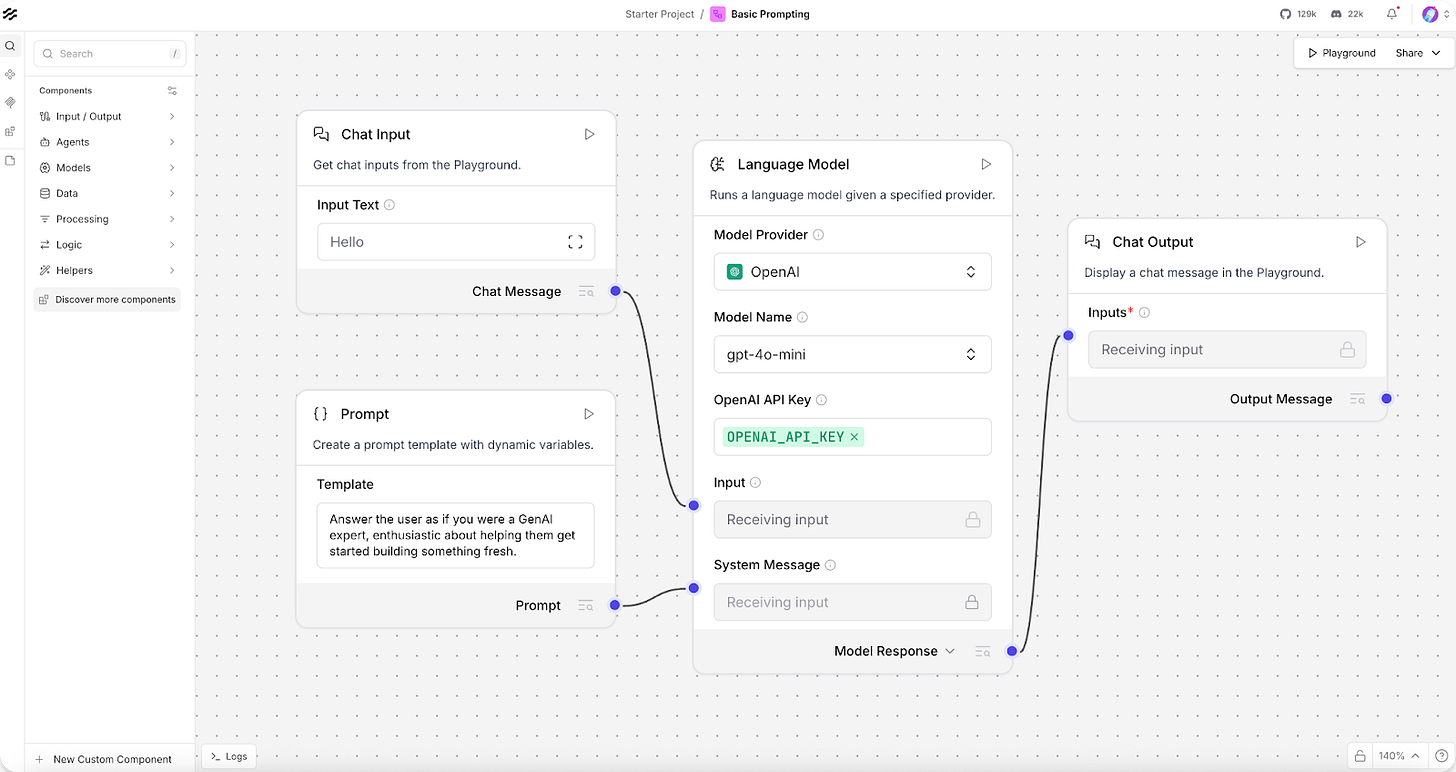

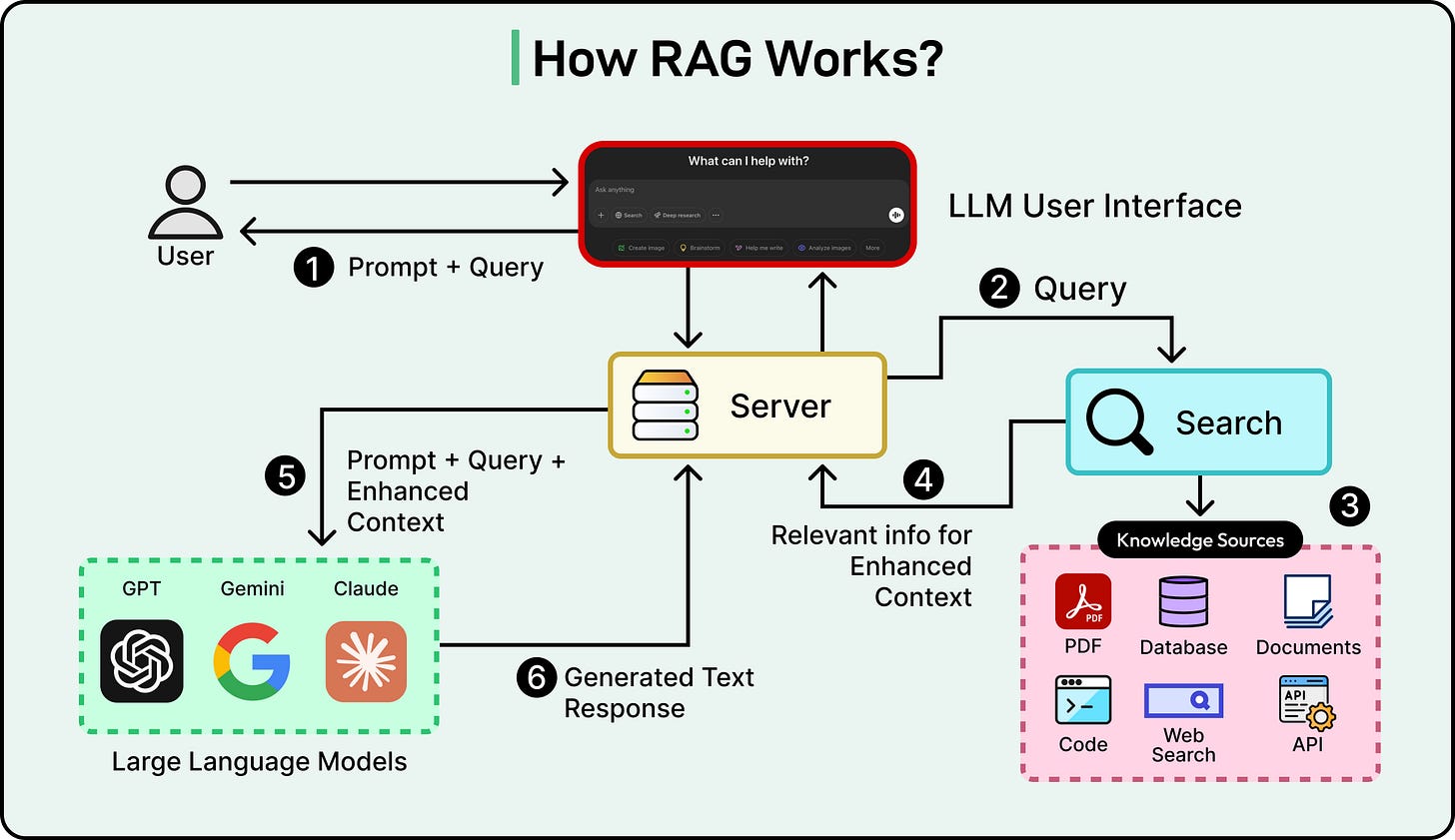

Langflow

Langflow is a low-code platform for designing and deploying AI-powered agents and retrieval-augmented generation (RAG) workflows, built on top of the LangChain framework. It provides a drag-and-drop interface for constructing chains of prompts, tools, memory modules, and data sources, with support for all major LLMs and vector databases.

Developers can visually orchestrate multi-agent conversations, manage memory and retrieval layers, and then deploy those flows as APIs or standalone applications. This eliminates the need for extensive backend engineering when prototyping complex AI pipelines. What used to take weeks of coding can often be assembled in an afternoon.

Langflow has attracted a significant community of data scientists and engineers. Its most common use cases include RAG pipeline prototyping, multi-agent conversation design, custom chatbot creation, and rapid LLM application development.

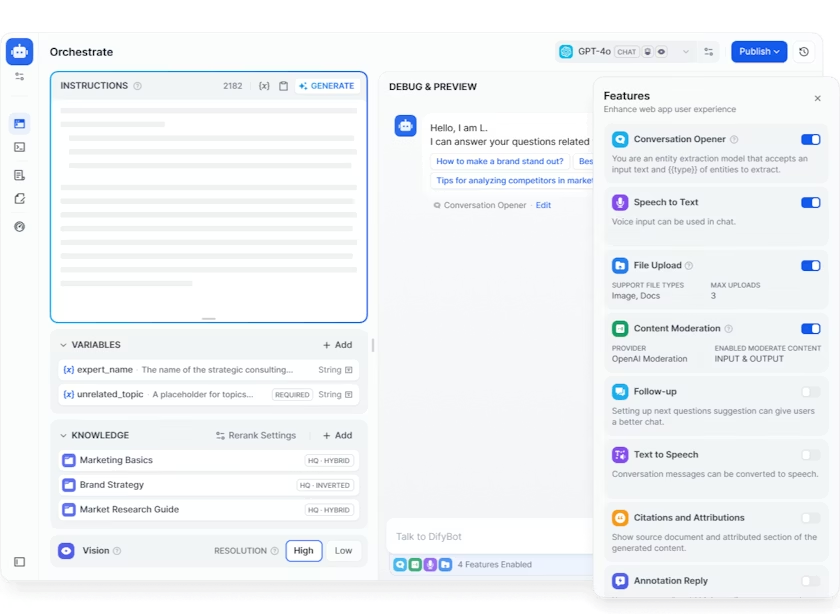

Dify

Dify is a production-ready platform for agentic workflow development, offering an all-in-one toolchain to build, deploy, and manage AI applications. Written primarily in TypeScript, it handles everything from enterprise QA bots to AI-driven custom assistants.

The platform includes a workflow builder for defining tool-using agents, built-in RAG pipeline management, support for multiple AI model providers, including OpenAI, Anthropic, and various open-source LLMs, usage monitoring, and both local and cloud deployment options. It also supports Model Context Protocol (MCP) integration. Dify handles the infrastructure boilerplate so teams can focus on crafting their agent logic.

Dify fills a crucial gap for teams that want to stand up AI-powered services quickly under an open-source, self-hostable framework. Its use cases span enterprise chatbot deployment, AI-powered internal tools, customer support automation, and multi-model orchestration.

LangChain

LangChain has cemented its position as the foundational framework for building reliable AI agents in Python. It provides modular components for chains, agents, memory, retrieval, tool use, and multi-agent orchestration. Its companion project, LangGraph, extends this further with support for complex, stateful agent workflows that include cycles and conditional branching.
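The idea of a stateful workflow with cycles and conditional branching is easier to see in code. The sketch below is plain Python and deliberately does not use the LangChain or LangGraph APIs; the node names (`draft`, `review`), the routing function, and the approval rule are all hypothetical stand-ins for real LLM calls.

```python
from typing import Any, Callable, Dict

State = Dict[str, Any]
Node = Callable[[State], State]


def draft(state: State) -> State:
    """Stand-in for an LLM call: produce a new draft each time it runs."""
    attempts = state.get("attempts", 0) + 1
    return {**state, "attempts": attempts, "draft": f"draft v{attempts}"}


def review(state: State) -> State:
    """Stand-in for a critic step: approve only from the third attempt on."""
    return {**state, "approved": state["attempts"] >= 3}


def route_after_review(state: State) -> str:
    """Conditional edge: loop back to 'draft' until the review approves."""
    return "END" if state["approved"] else "draft"


def run_graph(state: State, max_steps: int = 20) -> State:
    """Walk the graph, carrying the shared state from node to node."""
    nodes: Dict[str, Node] = {"draft": draft, "review": review}
    # Each edge function picks the next node name from the current state.
    edges: Dict[str, Callable[[State], str]] = {
        "draft": lambda s: "review",
        "review": route_after_review,
    }
    current = "draft"
    for _ in range(max_steps):
        state = nodes[current](state)
        current = edges[current](state)
        if current == "END":
            return state
    raise RuntimeError("graph did not finish within max_steps")


result = run_graph({})
print(result["draft"], result["approved"])
```

The `review` to `draft` edge is the cycle, and `route_after_review` is the conditional branch. The `max_steps` guard is a design choice for the sketch so that a routing bug cannot loop forever.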

Many of the other projects on this list build on top of LangChain or integrate with it directly, making it the connective tissue of the AI agent ecosystem. It offers integrations for Anthropic, OpenAI, Google, and the other major model providers.

Developers use LangChain for building multi-agent systems, tool-using AI agents, RAG pipelines, conversational AI applications, and structured data extraction.

Open WebUI

Open WebUI is a self-hosted AI platform designed to operate entirely offline, with over 282 million downloads and 124k+ stars. It provides a polished, ChatGPT-style web interface that connects to Ollama, OpenAI-compatible APIs, and other LLM runners, all installable from a single pip command.
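"OpenAI-compatible" means that different backends accept the same chat request shape, so a front end only needs a base URL to switch between them. The sketch below builds such a request with the standard library. The helper names are hypothetical, and the base URL in the usage comment assumes a local Ollama server exposing its OpenAI-compatible endpoint under `/v1`.

```python
import json
import urllib.request
from typing import Dict, List, Optional


def build_chat_request(
    base_url: str,
    model: str,
    messages: List[Dict[str, str]],
    api_key: Optional[str] = None,
) -> urllib.request.Request:
    """Build a chat completion request for any OpenAI-compatible backend."""
    headers = {"Content-Type": "application/json"}
    if api_key:
        # Hosted providers expect a bearer token; local runners often do not.
        headers["Authorization"] = f"Bearer {api_key}"
    payload = {"model": model, "messages": messages}
    return urllib.request.Request(
        base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


def extract_reply(response_body: dict) -> str:
    """Pull the assistant text out of a chat completion response."""
    return response_body["choices"][0]["message"]["content"]


# Example (assumes a local server; the model name is illustrative):
# req = build_chat_request("http://localhost:11434/v1", "llama3.2",
#                          [{"role": "user", "content": "Hello"}])
```

Swapping the backend is then a matter of changing `base_url` and, where needed, `api_key`, which is what lets one interface sit in front of several model runners.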

The feature set is extensive. It includes a built-in inference engine for RAG, hands-free voice and video call capabilities with multiple speech-to-text and text-to-speech providers, a model builder for creating custom agents, native Python function calling, and persistent artifact storage. For enterprise users, it offers SSO, role-based access control, and audit logs. A community marketplace of prompts, tools, and functions makes extending the platform straightforward.

If Ollama provides the engine for running local models, Open WebUI provides the interface. Together, they form a popular self-hosted AI stack. Its primary use cases include private ChatGPT replacements, multi-model comparison setups, team AI platforms, and RAG-powered document question-answering systems.

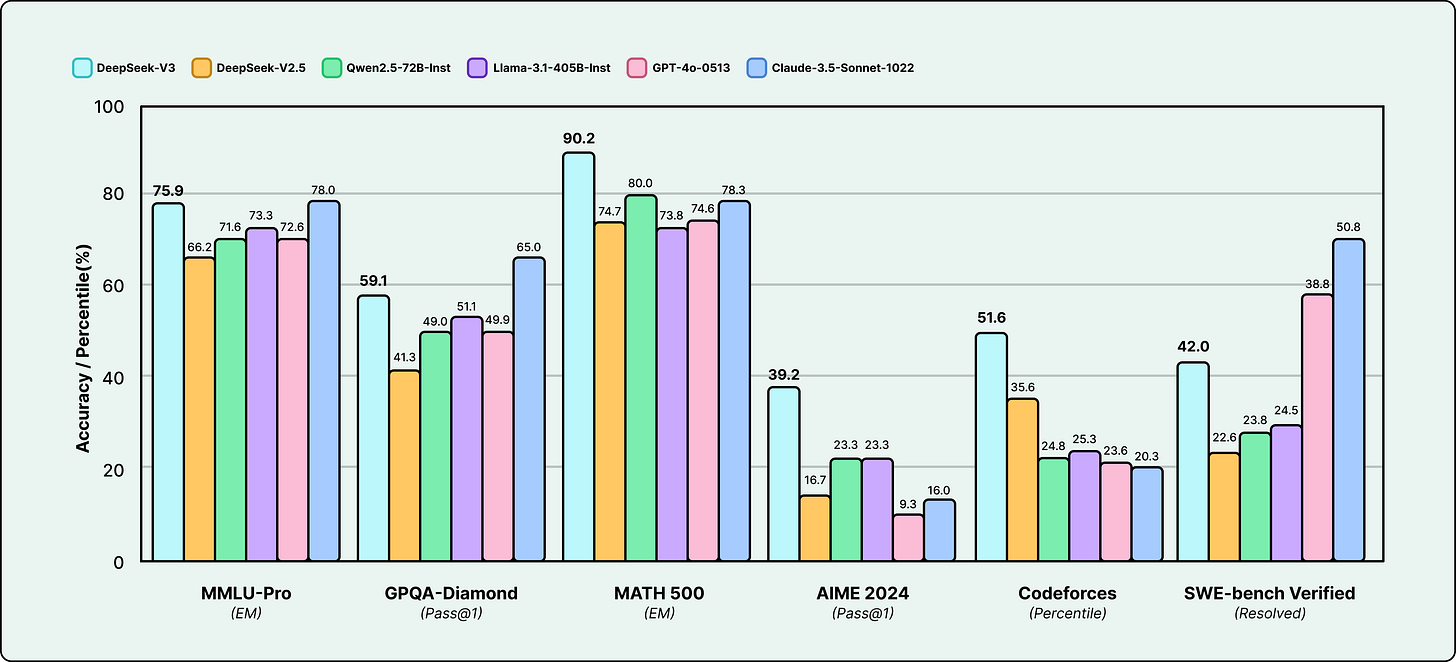

DeepSeek-V3

DeepSeek-V3 stunned the AI community by delivering benchmark results that rival frontier closed models like GPT-4, as a fully open-weight release.

Built on a Mixture-of-Experts (MoE) architecture, it is optimized for general-purpose reasoning and supports ultra-long 128K token contexts. The team introduced novel training techniques, including distilled reasoning chains, that set new standards in the open model community.
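For readers new to Mixture-of-Experts, the core idea is that a small router picks a few experts per token, so only a fraction of the model's parameters run for any given input. The sketch below shows generic top-k softmax gating in plain Python. It is a teaching example under that assumption, not DeepSeek-V3's actual router, and the function names and toy experts are hypothetical.

```python
import math
from typing import Callable, List, Sequence, Tuple


def softmax(values: Sequence[float]) -> List[float]:
    """Numerically stable softmax over router scores."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(router_logits: Sequence[float], top_k: int) -> List[Tuple[int, float]]:
    """Pick the top_k experts for one token and renormalize their weights."""
    probs = softmax(router_logits)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    kept = sum(probs[i] for i in chosen)
    return [(i, probs[i] / kept) for i in chosen]


def moe_output(
    token: float,
    router_logits: Sequence[float],
    experts: Sequence[Callable[[float], float]],
    top_k: int = 2,
) -> float:
    """Weighted sum of the selected experts only; the rest are never called."""
    return sum(w * experts[i](token) for i, w in route_token(router_logits, top_k))


# Four toy "experts"; with top_k=2 only two of them run for this token.
toy_experts = [lambda x: x, lambda x: 2 * x, lambda x: 3 * x, lambda x: 4 * x]
print(route_token([0.0, 2.0, 1.0, -1.0], top_k=2))
print(moe_output(1.0, [0.0, 2.0, 1.0, -1.0], toy_experts))
```

The efficiency argument follows from `moe_output`: compute scales with `top_k`, not with the total number of experts, which is how MoE models grow their parameter count without a matching growth in per-token cost.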

DeepSeek-V3 proved that the open-source community can produce models competitive with the best proprietary offerings. This has significant implications for developers who want high-capability AI without vendor lock-in, recurring API costs, or data privacy concerns. The model is available for free commercial use and can be fine-tuned for domain-specific applications. It runs locally via Ollama and has become a popular choice for powering custom AI agents and enterprise chatbots.

Google Gemini CLI

Gemini CLI is Google’s open-source contribution to the agentic coding space, bringing the Gemini multimodal model directly into developers’ terminals.

With a simple npx command, developers can chat with, instruct, and automate tasks using the Gemini model from the command line. It supports code assistance, natural language queries, integration with Google Cloud services, and can be embedded into scripts and CI/CD pipelines.

The tool abstracts away the complexity of API integration, making frontier AI capabilities immediately accessible from any terminal environment. Common uses include AI-assisted coding, command-line automation, batch file processing, and rapid prototyping.

RAGFlow

RAGFlow is an open-source retrieval-augmented generation engine that combines advanced RAG techniques with agentic capabilities to create a robust context layer for LLMs.

The platform provides an end-to-end framework covering document ingestion, vector indexing, query planning, and tool-using agents that can invoke external APIs beyond simple text retrieval. It also supports citation tracking and multi-step reasoning, which are critical for enterprise applications where answer traceability matters.
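The ingestion, indexing, retrieval, and citation steps can be sketched in a few lines. This is a toy illustration, not RAGFlow's implementation: the "embedding" is a simple word count rather than a learned vector, and the `Chunk` class, helper names, and sample documents are invented for the example.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Chunk:
    source: str  # document name, kept so answers can cite it
    text: str


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts. Real systems use a vector model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, index: List[Tuple[Chunk, Counter]], top_k: int = 2) -> List[Chunk]:
    """Rank indexed chunks by similarity to the query and keep the best top_k."""
    query_vec = embed(query)
    ranked = sorted(index, key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:top_k]]


def build_prompt(query: str, chunks: List[Chunk]) -> str:
    """Number each retrieved chunk so the answer can cite it as [1], [2], ..."""
    context = "\n".join(f"[{i}] ({c.source}) {c.text}" for i, c in enumerate(chunks, start=1))
    return f"Answer using only the sources below and cite them by number.\n{context}\n\nQuestion: {query}"


# Ingestion: split documents into chunks and index them once.
chunks = [
    Chunk("handbook.md", "Employees accrue vacation days every month."),
    Chunk("security.md", "Rotate API keys every ninety days."),
    Chunk("handbook.md", "Expense reports are due by the fifth of each month."),
]
index = [(chunk, embed(chunk.text)) for chunk in chunks]

question = "How often should I rotate API keys?"
print(build_prompt(question, retrieve(question, index, top_k=1)))
```

Keeping `source` attached to every chunk is what makes traceability possible: the numbered context lets the model cite where each claim came from, and a reviewer can follow the citation back to the document.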

As organizations move beyond basic chatbots toward production AI systems, RAGFlow addresses the hardest challenge: making AI answers grounded, traceable, and reliable. With 70k+ stars, it has become a key infrastructure component for enterprise knowledge bases, compliance-focused AI, research assistants, and multi-source data analysis workflows [10].

Claude Code

Claude Code is Anthropic’s agentic coding tool that operates from the terminal. Once installed, it understands the full codebase context and executes developer commands via natural language. You can ask it to refactor functions, explain files, generate unit tests, handle git operations, and carry out complex multi-file changes, all guided by conversation.

Claude Code distinguishes itself from simpler code completion tools via its ability to reason about an entire project, execute multi-step tasks, and maintain context across long coding sessions. It operates as an AI pair programmer that is deeply aware of your project structure and can act on code independently.

Its primary applications include full-codebase refactoring, automated test generation, code review and explanation, and git workflow automation.

Key Trends Shaping This Landscape

Four trends cut across these projects:

The Local AI Revolution

Ollama, Open WebUI, and OpenClaw collectively signal a massive shift: developers want to run AI on their own hardware.

Privacy concerns, API costs, and the desire for deep customization are driving this movement. The infrastructure for self-hosted AI has matured to the point where a single command can spin up a full-featured AI platform.

Agentic AI Goes Mainstream

Nearly every repository on this list incorporates some form of autonomous agent behavior.

We have moved from AI that responds to AI that acts. Agents can now browse the web, execute code, manage files, orchestrate multi-step workflows, and even improve their own capabilities, all running continuously on your machine.

Open Models Close the Gap

DeepSeek-V3’s success proved that open-weight models can compete with the best proprietary offerings.

Combined with efficient local runtimes like Ollama, this means developers can build high-capability applications without any API dependency. This trend is reshaping the economics of AI development fundamentally.

The Rise of Visual AI Building

Langflow, Dify, and n8n show that drag-and-drop visual interfaces are becoming the preferred way to design AI agent pipelines.

This means domain experts, not just ML engineers, can create sophisticated AI applications. The barrier to entry for building production AI has never been lower.

Conclusion

The repositories profiled here are more than trending projects. They are the building blocks of a new AI infrastructure stack.

For developers, the takeaway is clear. Whether you are building an AI-powered product, automating internal workflows, or experimenting with frontier models, the tools in this list represent the most battle-tested, community-validated starting points available. Keep them on your radar. The pace of innovation in this space shows no signs of slowing down.

References:

OpenClaw - github.com/openclaw/openclaw

n8n - github.com/n8n-io/n8n

Ollama - github.com/ollama/ollama

Langflow - github.com/langflow-ai/langflow

Dify - github.com/langgenius/dify

LangChain - github.com/langchain-ai/langchain

Open WebUI - github.com/open-webui/open-webui

DeepSeek-V3 - github.com/deepseek-ai/DeepSeek-V3

Google Gemini CLI - github.com/google-gemini/gemini-cli

RAGFlow - github.com/infiniflow/ragflow

Claude Code - github.com/anthropics/claude-code
